Understanding Your Score

TruthVouch assessments automatically calculate dimension-based scores that measure your organization’s AI maturity across governance, technical, compliance, and operational areas. Learn how scores are calculated and what they mean.

Overall Score

Your Overall AI Maturity Score is a weighted average (0-100) of four core dimensions:

| Dimension | Weight | What It Measures |
|---|---|---|
| Governance & Strategy | 35% | Leadership oversight, policies, risk frameworks |
| Technical Readiness | 30% | Detection capabilities, monitoring, data quality |
| Compliance & Ethics | 25% | Regulatory readiness, bias mitigation, transparency |
| Operations & Culture | 10% | Team skills, incident response, change management |

Example: Overall Score = (Governance 80 × 0.35) + (Technical 70 × 0.30) + (Compliance 85 × 0.25) + (Operations 60 × 0.10) = 28 + 21 + 21.25 + 6 = 76.25
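The same calculation as a short Python sketch. The weights come from the table above; the function and variable names are illustrative only and are not part of a TruthVouch API. For the example inputs, the weighted sum is 28 + 21 + 21.25 + 6 = 76.25.

```python
# Dimension weights from the table above; they sum to 1.0 (100%).
DIMENSION_WEIGHTS = {
    "governance": 0.35,
    "technical": 0.30,
    "compliance": 0.25,
    "operations": 0.10,
}


def overall_score(dimension_scores: dict[str, float]) -> float:
    """Weighted average of the four dimension scores (each 0-100)."""
    # Iterate over the weights so a missing dimension raises KeyError.
    return sum(weight * dimension_scores[dim] for dim, weight in DIMENSION_WEIGHTS.items())


example = {"governance": 80, "technical": 70, "compliance": 85, "operations": 60}
print(round(overall_score(example), 2))  # 76.25
```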

Dimension Scoring

Each dimension breaks down into three or four sub-categories. Each sub-category is scored 0-100, and the percentage in parentheses is its weight within that dimension:

Governance & Strategy (35%)

1. AI Governance Framework (25%)

- Board/executive visibility and oversight

- Formal AI governance policy (documented)

- Risk assessment process for AI investments

- Responsibility matrix (who approves AI projects?)

2. Strategy & Planning (20%)

- AI strategy aligned with business goals

- Budget allocation for AI governance

- Vendor evaluation and selection process

- Lifecycle planning for AI systems

3. Risk Management (20%)

- Risk identification methodology

- Mitigation strategies documented

- Incident response procedures

- Regular risk reviews

4. Vendor & Third-Party Management (35%)

- Vendor security/compliance requirements

- Contract terms covering governance

- Regular vendor audits

- Data protection agreements (DPAs)

Technical Readiness (30%)

1. Detection & Monitoring (35%)

- Hallucination detection capability (scored from 0 = manual review only to 100 = continuous automated detection)

- Cross-check automation for your knowledge base

- Real-time monitoring of AI outputs

- Integration with AI systems (SDKs, APIs, or manual review)

2. Data Quality & Governance (25%)

- Truth Nugget coverage (% of critical facts documented)

- Data validation processes

- Source tracking and citation

- Version control and audit trails

3. System Architecture (25%)

- Observation points for AI outputs

- Data pipeline security

- Backup and recovery procedures

- API/SDK integration maturity

4. Observability & Logging (15%)

- Centralized logging of all AI interactions

- Query traceability

- Response attribution

- Retention policies

Compliance & Ethics (25%)

1. Regulatory Readiness (35%)

- Industry compliance frameworks mapped (SOX, HIPAA, GDPR, EU AI Act)

- Compliance checklist completion

- Third-party audit preparation

- Control documentation

2. Bias & Fairness Mitigation (25%)

- Bias detection methodology

- Fairness criteria defined

- Training data bias assessment

- Ongoing bias monitoring

3. Transparency & Explainability (25%)

- Disclosure: Does your org disclose AI usage to customers?

- Explainability: Can you explain AI decision-making?

- Attribution: Can you cite sources for AI claims?

- Correction velocity: How quickly do you fix errors?

4. Data Privacy & Protection (15%)

- Privacy impact assessments

- Data minimization practices

- User consent processes

- Encryption and access controls

Operations & Culture (10%)

1. Team Skills & Training (40%)

- AI expertise on staff (data scientists, engineers, governance specialists)

- Training programs for non-technical staff

- Certification/credential programs

- External advisor/consultant engagement

2. Incident Response & Learning (35%)

- Incident classification and escalation

- Root cause analysis process

- Corrective action tracking

- Communication protocols

3. Cross-Functional Alignment (25%)

- Executive + legal + technical alignment

- Regular governance review meetings

- Communication cadence

- Role clarity across departments
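A dimension score combines its sub-category scores the same way the overall score combines dimensions. The Python sketch below encodes the sub-category weights listed above and checks that each dimension's weights add up to 100%. Short keys such as "framework" and "incident_response" are hypothetical labels, not TruthVouch identifiers.

```python
import math

# Sub-category weights per dimension, as listed above (fractions of 1.0).
SUBCATEGORY_WEIGHTS = {
    "governance": {"framework": 0.25, "strategy": 0.20, "risk": 0.20, "vendor": 0.35},
    "technical": {"detection": 0.35, "data_quality": 0.25, "architecture": 0.25, "observability": 0.15},
    "compliance": {"regulatory": 0.35, "bias": 0.25, "transparency": 0.25, "privacy": 0.15},
    "operations": {"skills": 0.40, "incident_response": 0.35, "alignment": 0.25},
}

# Sanity check: each dimension's sub-category weights must sum to 100%.
for dimension, weights in SUBCATEGORY_WEIGHTS.items():
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError(f"{dimension} sub-category weights do not sum to 100%")


def dimension_score(dimension: str, category_scores: dict[str, float]) -> float:
    """Weighted average of 0-100 sub-category scores; the result stays on 0-100."""
    weights = SUBCATEGORY_WEIGHTS[dimension]
    return sum(w * category_scores[category] for category, w in weights.items())


ops = {"skills": 70, "incident_response": 60, "alignment": 50}
print(round(dimension_score("operations", ops), 2))  # 61.5
```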

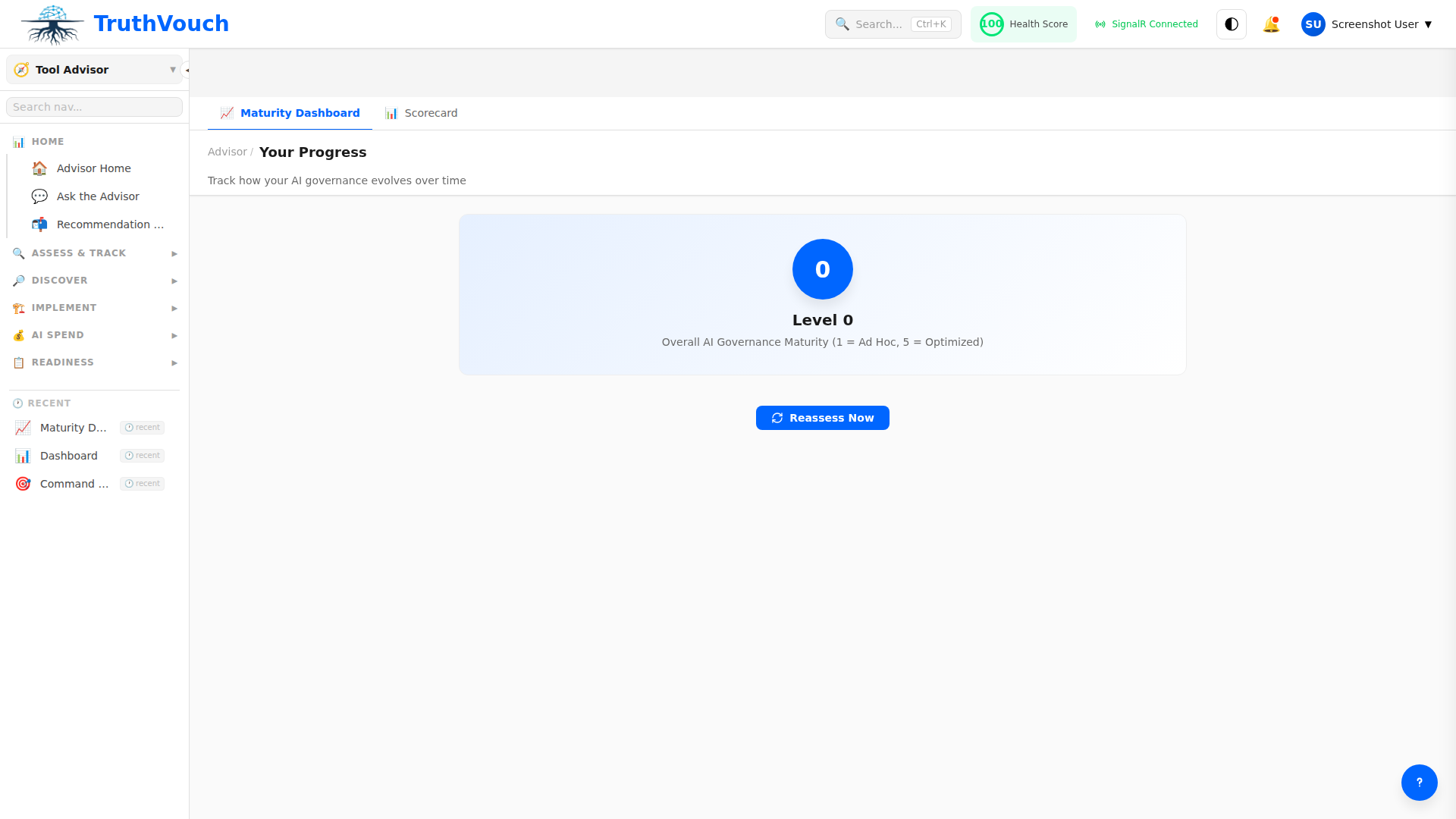

Score Interpretation

Maturity Levels

| Score Range | Level | What It Means |
|---|---|---|
| 80-100 | Mature | Best-in-class governance; comprehensive controls |
| 60-79 | Developed | Strong foundation; targeted improvements needed |
| 40-59 | Emerging | Basic processes; significant gaps remain |
| 20-39 | Initial | Early stage; foundational work required |
| 0-19 | Ad-hoc | Little to no formal governance |
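Mapping a 0-100 score to a maturity level is a simple threshold lookup. In this Python sketch the band boundaries come from the table. Treating each band's lower bound as inclusive, so that a fractional score such as 79.5 lands in Developed, is an assumption, because the table lists whole-number ranges only.

```python
# Inclusive lower bound of each maturity band, highest first (from the table above).
MATURITY_LEVELS = [
    (80, "Mature"),
    (60, "Developed"),
    (40, "Emerging"),
    (20, "Initial"),
    (0, "Ad-hoc"),
]


def maturity_level(score: float) -> str:
    """Return the maturity level for a 0-100 score."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    for lower_bound, level in MATURITY_LEVELS:
        if score >= lower_bound:
            return level
    # Unreachable: the last band starts at 0 and the score was validated above.
    raise AssertionError("no maturity band matched")


print(maturity_level(76.25))  # Developed
```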

Red Flags (Score < 40)

Scores below 40 in any dimension indicate:

- Governance < 40: No formal AI policy; significant compliance risk

- Technical < 40: Limited monitoring; hallucinations likely undetected

- Compliance < 40: Regulatory risk; unprepared for audits

- Operations < 40: Team gaps; slow incident response
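A Python sketch that reports which dimensions fall below the red-flag threshold. The dimension keys and sample scores are illustrative. A score of exactly 40 is not flagged, since the rule is strictly "below 40".

```python
RED_FLAG_THRESHOLD = 40

# What a sub-40 score typically signals, per dimension (from the list above).
RED_FLAG_MEANINGS = {
    "governance": "No formal AI policy; significant compliance risk",
    "technical": "Limited monitoring; hallucinations likely undetected",
    "compliance": "Regulatory risk; unprepared for audits",
    "operations": "Team gaps; slow incident response",
}


def red_flags(dimension_scores: dict[str, float]) -> dict[str, str]:
    """Return the meaning of every dimension scoring strictly below 40."""
    return {
        dim: RED_FLAG_MEANINGS[dim]
        for dim, score in dimension_scores.items()
        if score < RED_FLAG_THRESHOLD
    }


scores = {"governance": 72, "technical": 35, "compliance": 40, "operations": 18}
for dim, meaning in red_flags(scores).items():
    print(f"{dim}: {meaning}")
```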

Benchmarking

Your scores are compared against:

- Industry peers (Finance, Healthcare, Tech, etc.)

- Company size (1-10, 11-50, 51-250, 251-1000, 1000+)

- Geography (US, Europe, APAC)

See Benchmarks to understand your percentile ranking.

Scoring Methodology

Question Weighting

Each assessment question is weighted based on:

- Impact: How critical is this control? (Strategic questions weighted higher)

- Frequency: How often is this capability needed?

- Precedent: Alignment with industry-standard practices

Example: “Does your organization have a formal AI governance policy?” is worth more than “Do you track AI spend monthly?”

Scoring Algorithm

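- Raw Score: Each question is answered 0 (No), 0.5 (Partial), or 1 (Yes)

- Category Score: Weighted average of the raw scores of all questions in a category, × 100 (giving 0-100)

- Dimension Score: Weighted average of the dimension's category scores, using the sub-category weights (0-100)

- Overall Score: Weighted average of the four dimension scores, using the dimension weights (0-100)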
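The category step, sketched in Python. This assumes each question carries a numeric weight; the real weights (derived from impact, frequency, and precedent) are not published here, so the values below are hypothetical. Dimension and overall scores then reuse the same weighted-average step on values already on a 0-100 scale, so no further × 100 is applied.

```python
from dataclasses import dataclass

# Raw score for each answer option, including partial credit.
RAW_SCORES = {"No": 0.0, "Partial": 0.5, "Yes": 1.0}


@dataclass
class Answer:
    response: str  # "No", "Partial", or "Yes"
    weight: float  # hypothetical question weight; higher means more critical


def category_score(answers: list[Answer]) -> float:
    """Weighted average of raw answer scores, scaled to 0-100."""
    total_weight = sum(a.weight for a in answers)
    if total_weight <= 0:
        raise ValueError("a category needs at least one positively weighted question")
    weighted_sum = sum(RAW_SCORES[a.response] * a.weight for a in answers)
    return 100 * weighted_sum / total_weight


# Hypothetical answers: the formal-policy question (weight 3) counts more
# than the monthly AI-spend tracking question (weight 1).
answers = [
    Answer("Yes", 3.0),      # formal AI governance policy exists
    Answer("Partial", 2.0),  # risk assessment process is still a draft
    Answer("No", 1.0),       # AI spend not tracked monthly
]
print(round(category_score(answers), 2))  # 66.67
```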

Partial Credit

Many questions allow partial credit (0.5) for:

- “We have a draft policy” (not yet formalized)

- “We monitor some AI systems” (not all)

- “We review annually” (not continuously)

This allows a more granular assessment than a binary yes/no answer.

Confidence Scoring

Each dimension includes a Confidence Score (0-100):

- 90+: High confidence; limited information gaps

- 70-89: Moderate confidence; minor clarifications needed

- 50-69: Lower confidence; some detailed follow-up recommended

- <50: Low confidence; suggest detailed audit or consultant review

If confidence is low, consider seeking external validation before acting on the results.
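A minimal Python sketch of the confidence bands above. Treating fractional values (for example 89.5) as belonging to the lower band is an assumption, and the function names are hypothetical.

```python
def confidence_band(confidence: float) -> str:
    """Map a 0-100 confidence score to the bands listed above."""
    if confidence >= 90:
        return "High"
    if confidence >= 70:
        return "Moderate"
    if confidence >= 50:
        return "Lower"
    return "Low"


def needs_external_validation(confidence: float) -> bool:
    """Below 50, consider an audit or consultant review before acting."""
    return confidence < 50


for c in (95, 89.5, 55, 42):
    print(c, confidence_band(c), needs_external_validation(c))
```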

Score Stability

Assessment scores are relatively stable but can fluctuate:

Month-to-Month Variation

- Up to ±5 points: Natural variance from differences in how questions are interpreted

- ±10 points or more: Significant change; investigate what changed
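The two thresholds can be applied mechanically, as in this Python sketch. The guidance does not say how to treat a move of more than 5 but fewer than 10 points, so the middle category here is an assumption.

```python
def classify_change(previous: float, current: float) -> str:
    """Classify a month-to-month change in a score."""
    delta = abs(current - previous)
    if delta <= 5:
        return "natural variance"
    if delta >= 10:
        return "significant: investigate what changed"
    # Assumption: 5-10 point moves are not covered above; flag them for review.
    return "between thresholds: keep monitoring"


print(classify_change(70, 73))  # natural variance
print(classify_change(70, 58))  # significant: investigate what changed
```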

Seasonal Patterns

- Scores may dip after compliance events (new regulations)

- Improve after training programs are completed

- Vary with team turnover

Improvement Tracking

Historical Comparison

After your second assessment (minimum 30 days later):

- See dimension-by-dimension progress

- Understand which improvements moved the needle

- Track overall maturity trajectory
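A Python sketch of a dimension-by-dimension comparison between two assessments that enforces the 30-day minimum gap and picks out the largest gain. The data shapes, function name, and sample scores are hypothetical.

```python
from datetime import date

MIN_DAYS_BETWEEN = 30  # minimum gap between assessments for a historical comparison


def dimension_progress(
    earlier: dict[str, float],
    later: dict[str, float],
    earlier_date: date,
    later_date: date,
) -> dict[str, float]:
    """Per-dimension change between two assessments (positive = improvement)."""
    if (later_date - earlier_date).days < MIN_DAYS_BETWEEN:
        raise ValueError(f"assessments must be at least {MIN_DAYS_BETWEEN} days apart")
    return {dim: later[dim] - earlier[dim] for dim in earlier}


progress = dimension_progress(
    {"governance": 60, "technical": 65, "compliance": 55, "operations": 50},
    {"governance": 68, "technical": 64, "compliance": 61, "operations": 52},
    date(2024, 1, 15),
    date(2024, 4, 15),
)
biggest_gain = max(progress, key=progress.get)
print(progress)      # {'governance': 8, 'technical': -1, 'compliance': 6, 'operations': 2}
print(biggest_gain)  # governance
```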

Projected Trajectory

Based on your improvement rate:

- “At current pace, you’ll reach 80 Governance by Q3 2024”

- “You’ve plateaued at 65 Technical — likely need external help”

- “Fastest improvement: Operations (+8 points/quarter)”
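One simple way to produce projections like these is straight-line extrapolation of the average rate of change, sketched below in Python. Whether TruthVouch uses this model is not documented here; the functions, history format, and sample scores are hypothetical.

```python
import math
from typing import Optional


def points_per_quarter(history: list[tuple[float, float]]) -> float:
    """Average rate from (quarters_since_first_assessment, score) pairs, oldest first."""
    (t_first, s_first), (t_last, s_last) = history[0], history[-1]
    if t_last <= t_first:
        raise ValueError("need at least two assessments at different times")
    return (s_last - s_first) / (t_last - t_first)


def quarters_to_target(current: float, target: float, rate: float) -> Optional[int]:
    """Whole quarters until `target` at a constant `rate`; None if not improving."""
    if current >= target:
        return 0
    if rate <= 0:
        return None  # plateaued or declining: no straight-line projection
    return math.ceil((target - current) / rate)


governance_history = [(0, 56), (1, 61), (2, 67)]
rate = points_per_quarter(governance_history)  # 5.5 points/quarter
print(quarters_to_target(67, 80, rate))        # 3
```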

Customization (Enterprise)

Enterprise customers can:

- Reweight dimensions (e.g., Compliance = 40% instead of 25%)

- Add custom questions (company-specific concerns)

- Adjust scoring rubrics (align with internal standards)

Contact your Customer Success Manager (CSM) to customize scoring.
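If you reweight dimensions, the weights still need to add up to 100%. The Python sketch below checks a custom weighting and recomputes the overall score. How the extra 15 percentage points for Compliance are taken from the other dimensions is a hypothetical choice, and none of these names are part of a TruthVouch API.

```python
import math

DEFAULT_WEIGHTS = {"governance": 0.35, "technical": 0.30, "compliance": 0.25, "operations": 0.10}


def validate_weights(weights: dict[str, float]) -> None:
    """Custom weights must cover the same four dimensions, be non-negative, and sum to 1."""
    if set(weights) != set(DEFAULT_WEIGHTS):
        raise ValueError("weights must cover exactly the four dimensions")
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError(f"weights sum to {sum(weights.values())}, expected 1.0")


def weighted_overall(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Overall score under a given (validated) set of dimension weights."""
    validate_weights(weights)
    return sum(w * scores[dim] for dim, w in weights.items())


# Hypothetical reweighting: Compliance raised to 40%, the others reduced to compensate.
custom = {"governance": 0.30, "technical": 0.25, "compliance": 0.40, "operations": 0.05}
scores = {"governance": 80, "technical": 70, "compliance": 85, "operations": 60}
print(round(weighted_overall(scores, DEFAULT_WEIGHTS), 2))  # 76.25
print(round(weighted_overall(scores, custom), 2))           # 78.5
```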

Related Topics

- Taking an Assessment — Step-by-step walkthrough

- Benchmarks — How you compare to peers

- Improvement Planning — Actions based on your scores

Next Steps

- Review your dimension scores — which area is weakest?

- Compare to benchmarks — where are you vs. peers?

- Read improvement recommendations — prioritize the top 3 gaps

- Plan actions — assign owners and timelines

- Re-assess in 90 days — measure progress