Regulatory FAQ Bot

The Regulatory FAQ Bot is an AI-powered assistant that automatically answers compliance and governance questions in natural language. Ask about regulations, requirements, and best practices relevant to your jurisdiction and industry. Responses include source citations and cross-regulation mapping.

How It Works

- Ask in plain English: no legal jargon required. Examples:

- “Do we need customer consent for AI-generated content?”

- “What does the EU AI Act say about high-risk systems?”

- “Are we compliant with SOX if we use AI for financial reporting?”

- “What’s the difference between GDPR and CCPA on data retention?”

- Get sourced answers automatically: each response includes:

- Relevant regulation text (with section numbers, auto-cited)

- Interpretation and practical guidance (AI-generated)

- Links to official guidance documents

- Application to your organization (personalized)

- Compare regulations: ask the bot to map overlaps:

- “What overlaps between GDPR and HIPAA on data encryption?”

- “Do GDPR and CCPA require the same data retention period?”

Available Jurisdictions & Frameworks

Regulations

- EU: GDPR, EU AI Act, eIDAS, NIS 2, Digital Services Act

- US: Federal (FTC Act Section 5, HIPAA, SOX, GLBA), State (CCPA, CPRA, VCDPA)

- UK: UK AI Bill (proposed), UK GDPR

- Canada: PIPEDA, Bill C-27 (proposed)

- APAC: PDPA (Singapore), PIPL (China), APPI (Japan)

- Industry: HIPAA (Health), SOX (Finance), PCI DSS (Payments), ISO/IEC 42001 (AI Management)

Standards

- ISO/IEC 42001 — AI Management System

- NIST AI RMF — AI Risk Management Framework

- SEC AI Disclosure Guidance — Financial AI governance

Question Types

Policy Questions

- “What’s the regulatory status of GenAI in our jurisdiction?”

- “Do we need to disclose AI usage to customers?”

- “What’s required for AI audit trails?”

Compliance Gaps

- “Are we GDPR compliant if we use OpenAI’s API?”

- “Does our AI system need a Data Protection Impact Assessment (DPIA)?”

- “What controls does HIPAA require for AI in healthcare?”

Comparison Questions

- “What’s the difference between the EU AI Act and NIST AI RMF?”

- “How do CCPA and GDPR differ on user rights?”

- “Which regulations require transparency in AI decision-making?”

Timeline Questions

- “When does the EU AI Act take effect for high-risk systems?”

- “What’s the deadline for HIPAA AI compliance?”

Applicability Questions

- “Do we need to comply with the EU AI Act if we’re US-based?”

- “Does GDPR apply to our company?”

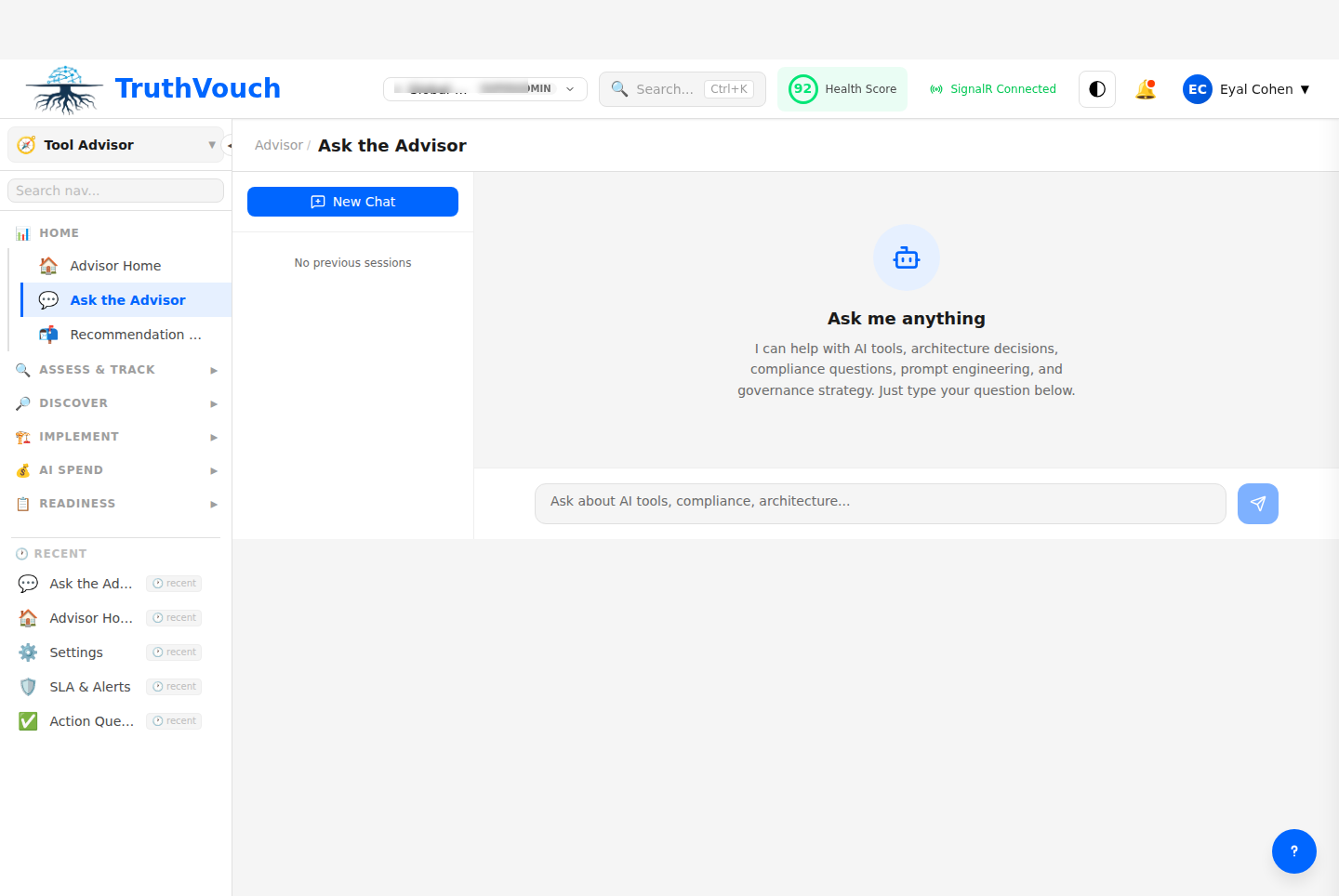

Using the Bot

Basic Usage

- Click Regulatory FAQ in Advisor

- Type your question in plain English

- Click Ask or press Enter

- The bot returns an answer with sources

Search previous answers: Filter by keyword, regulation, or jurisdiction

Advanced Features

Filter by jurisdiction: Before asking, select your region

- Narrows answer scope

- Prioritizes applicable regulations

- Example: “Just US federal” or “EU + UK”

Include context: Add company info for more personalized answers

- Industry (Finance, Healthcare, Retail, Tech)

- Company size

- Data types you handle (PII, Health data, Financial data)

- Geographic scope
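One way to keep this context consistent across questions is to store it once and prepend it to each question you type. The sketch below is illustrative only: `CompanyContext` and `with_context` are hypothetical names, not part of the bot, and the field values are examples.

```python
from dataclasses import dataclass, field


@dataclass
class CompanyContext:
    """Hypothetical record of the context fields listed above."""
    industry: str
    company_size: str
    data_types: list[str] = field(default_factory=list)
    geographic_scope: list[str] = field(default_factory=list)

    def summary(self) -> str:
        # Render the context as one sentence that can precede a question.
        data = ", ".join(self.data_types) or "unspecified"
        scope = ", ".join(self.geographic_scope) or "unspecified"
        return (
            f"Context: {self.industry} company, {self.company_size}; "
            f"data handled: {data}; operating in: {scope}."
        )


def with_context(question: str, ctx: CompanyContext) -> str:
    # Prepend the summary so every question carries the same background.
    return f"{ctx.summary()} {question}"


ctx = CompanyContext(
    industry="Healthcare",
    company_size="200 employees",
    data_types=["PII", "Health data"],
    geographic_scope=["US", "EU"],
)
print(with_context("Does our AI system need a DPIA?", ctx))
```

Keeping the context in one place means every team member asks with the same background, which makes saved answers easier to compare.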

Save answers: Click Save to build a personal FAQ library

- Reference later

- Share with team

- Track as audit evidence

Interpreting Answers

Source Quality

Each answer is attributed to one of four source types, listed here from highest to lowest authority:

- Official regulation text (highest authority) — Direct quote from law/regulation

- Official guidance (high authority) — Government or regulatory body interpretation

- Legal commentary (medium authority) — Lawyer/expert analysis

- Industry practice (informational) — What companies in your space typically do

Best practice: Rely on official regulation text and official guidance for audits; treat legal commentary and industry practice as input for planning only.
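If you export or copy sources into your own tooling, the authority tiers can be modelled as an ordered enum so that audit material is filtered consistently. This is a minimal sketch under that assumption; `Authority` and `audit_grade` are hypothetical names, and the sample sources are made up for illustration.

```python
from enum import IntEnum


class Authority(IntEnum):
    """Source tiers from the list above; higher value = higher authority."""
    INDUSTRY_PRACTICE = 1   # informational
    LEGAL_COMMENTARY = 2    # medium authority
    OFFICIAL_GUIDANCE = 3   # high authority
    REGULATION_TEXT = 4     # highest authority


def audit_grade(sources: list[tuple[str, Authority]]) -> list[str]:
    # Keep only official regulation text and official guidance,
    # ordered from highest to lowest authority.
    kept = [s for s in sources if s[1] >= Authority.OFFICIAL_GUIDANCE]
    return [name for name, _ in sorted(kept, key=lambda s: s[1], reverse=True)]


sources = [
    ("Peer survey of retention practices", Authority.INDUSTRY_PRACTICE),
    ("Regulator guidance note", Authority.OFFICIAL_GUIDANCE),
    ("GDPR Article 35", Authority.REGULATION_TEXT),
    ("Law firm blog post", Authority.LEGAL_COMMENTARY),
]
print(audit_grade(sources))  # ['GDPR Article 35', 'Regulator guidance note']
```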

Scope Limitations

The bot answers questions about:

- What regulations require

- Timeline and deadlines

- Who regulations apply to

- Cross-regulation comparisons

The bot does NOT:

- Provide legal advice (consult your lawyer)

- Give a definitive interpretation for your specific situation (too many variables)

- Guarantee compliance (regulatory landscapes change)

- Substitute for professional legal review

Common Answers

GDPR Questions

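Q: Do we need customer consent for AI processing? A: Under GDPR Article 6, you need a lawful basis for processing personal data. Consent is one; “legitimate interests” is another. The EU AI Act adds separate obligations for high-risk AI. Many analytics uses can rely on legitimate interests rather than explicit consent, provided you have weighed your interests against those of the individuals and you describe the processing in your privacy policy.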

Q: What’s a DPIA and when do we need one? A: GDPR Article 35 requires a Data Protection Impact Assessment for “processing likely to result in high risk.” AI systems that process personal data often meet that threshold. A basic template documents data flows, identifies risks, and lists the mitigation controls for each risk.
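The template elements named in the answer above (data flows, risks, mitigation controls) can be tracked in a small structure that flags what is still missing. This is a hypothetical skeleton for internal drafting, not a format prescribed by GDPR or produced by the bot; all names and sample entries are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Risk:
    description: str
    mitigations: list[str] = field(default_factory=list)


@dataclass
class DPIA:
    """Hypothetical skeleton: data flows, risks, and mitigation controls."""
    system_name: str
    data_flows: list[str] = field(default_factory=list)
    risks: list[Risk] = field(default_factory=list)

    def open_items(self) -> list[str]:
        # Report what is still missing before the draft goes to review.
        items = []
        if not self.data_flows:
            items.append("No data flows documented")
        items += [
            f"No mitigation for: {r.description}"
            for r in self.risks
            if not r.mitigations
        ]
        return items


dpia = DPIA(
    system_name="Support chatbot",
    data_flows=["Customer message -> hosted model -> support ticket"],
    risks=[
        Risk("Personal data sent to a third-party model", ["Redact identifiers"]),
        Risk("Transcripts retained indefinitely"),
    ],
)
print(dpia.open_items())  # ['No mitigation for: Transcripts retained indefinitely']
```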

EU AI Act Questions

Q: What makes an AI system “high-risk”? A: Annex III of the EU AI Act lists high-risk use cases (biometrics, employment decisions, eligibility for credit or insurance, law enforcement). Many customer-facing uses, such as general-purpose chatbots, fall outside Annex III, although transparency obligations may still apply. Classification depends on your use case.

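Q: When must we comply with the EU AI Act? A: The Act entered into force on 1 August 2024 and applies in phases (dates per the regulation as adopted):

- 2 February 2025: Prohibited practices (social scoring, subliminal manipulation)

- 2 August 2025: Obligations for general-purpose AI models

- 2 August 2026: Most remaining provisions, including Annex III high-risk and transparency requirements

- 2 August 2027: High-risk AI embedded in products covered by EU product-safety legislation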

US Privacy (CCPA) Questions

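Q: What’s CCPA and how does it differ from GDPR? A: CCPA (California) is generally less stringent than GDPR (EU) but covers similar ground (consumer rights, data transparency). Key difference: CCPA gives consumers the right to opt out of data sales, whereas GDPR requires a lawful basis, such as opt-in consent, before processing. CPRA amended and expanded CCPA, and newer state laws such as VCDPA (Virginia) align more closely with GDPR.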

Q: Do we need a Privacy Policy for AI? A: Yes. CCPA and GDPR require disclosure of data practices. For AI: explain what data you use, how you use it, who has access, and how long you retain it.

HIPAA (Health) Questions

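Q: Can we use commercial AI APIs for patient data? A: Only if the vendor acts as a HIPAA Business Associate and signs a Business Associate Agreement (BAA) with you. Several major vendors (OpenAI, Google, Anthropic) offer BAAs; confirm that the agreement covers the specific product you use. A GDPR-style Data Processing Agreement (DPA) does not substitute for a BAA. You remain responsible for your own HIPAA compliance, including vetting the vendor’s safeguards.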

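Q: What’s required for audit trails in healthcare AI? A: The HIPAA Security Rule requires audit controls that record activity in systems containing patient data: who accessed data, what they did, and when. For AI systems, log all inference requests, predictions, and corrections. HIPAA requires compliance documentation to be retained for 6 years, and many organizations apply the same period to audit logs.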
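A log entry that captures the who, what, and when described above might look like the following. This is a sketch under stated assumptions: the field names, the `new_entry` and `retain_until` helpers, and the sample identifiers are all hypothetical, and the entry stores a reference to the record rather than patient data itself.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date, datetime, timezone

RETENTION_YEARS = 6  # retention period stated in the answer above


@dataclass
class InferenceLogEntry:
    """Hypothetical audit record: who accessed data, what they did, when."""
    user_id: str
    action: str      # e.g. "inference_request", "prediction", "correction"
    record_ref: str  # internal reference, not the patient data itself
    timestamp: str   # ISO 8601, UTC


def new_entry(user_id: str, action: str, record_ref: str) -> InferenceLogEntry:
    now = datetime.now(timezone.utc).isoformat()
    return InferenceLogEntry(user_id, action, record_ref, now)


def retain_until(logged_on: date, years: int = RETENTION_YEARS) -> date:
    # Add whole years; 29 February falls back to 28 February
    # when the target year is not a leap year.
    try:
        return logged_on.replace(year=logged_on.year + years)
    except ValueError:
        return logged_on.replace(year=logged_on.year + years, day=28)


entry = new_entry("clinician-042", "inference_request", "case-7781")
print(json.dumps(asdict(entry)))
print(retain_until(date(2024, 2, 29)))  # 2030-02-28
```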

Using FAQ Answers in Your Governance

For Policy Development

- Ask bot: “What controls does [regulation] require for AI?”

- Use answer to draft your policy

- Share with Legal for review

- Reference bot’s sources in policy documentation

For Audit Preparation

- Ask: “What documentation does [regulation] require?”

- Collect required docs (DPIAs, risk assessments, etc.)

- Use bot answer as audit checklist

- Keep bot response as evidence you did due diligence

For Risk Assessment

- Ask: “Which regulations apply to my AI use case?”

- Ask: “What are the penalties for non-compliance?”

- Ask: “What are common violations in this space?”

- Prioritize controls based on risk/impact
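The last step, prioritizing by risk and impact, is often done with a simple likelihood-times-impact score. The sketch below assumes 1 to 5 scales for both; the `Gap` class, the scales, and the sample gaps are illustrative assumptions, not output from the bot.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    """Hypothetical compliance gap scored on 1-5 scales."""
    control: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minor) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(gaps: list[Gap]) -> list[Gap]:
    # Highest score first; sorted() is stable, so ties keep input order.
    return sorted(gaps, key=lambda g: g.score, reverse=True)


gaps = [
    Gap("Vendor agreement missing", likelihood=2, impact=5),
    Gap("No DPIA for chatbot", likelihood=4, impact=4),
    Gap("Privacy policy omits AI use", likelihood=3, impact=2),
]
for g in prioritize(gaps):
    print(g.score, g.control)
```

With these sample values the DPIA gap (score 16) ranks first, followed by the vendor agreement (10) and the privacy policy (6).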

Limitations & Disclaimers

The Regulatory FAQ Bot:

- Uses public regulatory text and expert knowledge

- May not reflect the latest regulatory changes (content is updated quarterly)

- Should be validated by your legal team before relying on it for compliance

- Provides general information, not legal advice

- Interprets in good faith but may not cover all edge cases

Always consult a lawyer for:

- Specific legal advice for your situation

- Contracts and data processing agreements

- Litigation or disputes

- Novel regulatory interpretations

Related Topics

- Assessment — Assess your compliance readiness

- Vendor Evaluation — Evaluate vendor compliance requirements

- Blueprints — Reference blueprints include compliance requirements

Next Steps

- Identify your applicable regulations — Ask the bot: “What regulations apply to [my industry/jurisdiction]?”

- Map compliance gaps — Ask: “Are we compliant with [regulation]?”

- Set compliance targets — Create action items for high-risk gaps

- Build governance documentation — Use bot answers to draft policies

- Track implementation — Document controls in your governance system