Vendor Scoring

Vendor Scoring automatically evaluates LLM providers against five standard criteria: security and compliance, features, cost, support, and governance. Use the pre-built scorecards to compare vendors, or customize the weights to match your own requirements.

Scoring Dimensions

TruthVouch automatically evaluates vendors across five core dimensions:

1. Security & Compliance (30%)

What’s evaluated:

- Data encryption (at rest and in transit)

- Access controls and authentication

- Audit logging and data residency options

- Security certifications (SOC 2, ISO 27001)

- Compliance frameworks (HIPAA, GDPR, SOX)

- Incident response and breach notification

- Third-party penetration testing

Scoring:

- 90+ = Comprehensive controls; multiple certifications; regular audits

- 70-89 = Strong baseline; major certifications; annual audits

- 50-69 = Adequate controls; basic certifications; some gaps

- <50 = Weak controls; no certifications; significant gaps

Examples:

- OpenAI: 82 (SOC 2, encryption, good practices but data US-only)

- Anthropic: 88 (SOC 2, HIPAA-ready, stronger privacy controls)

- Self-hosted: Varies (depends on your implementation)

2. Features & Capability (25%)

What’s evaluated:

- Model quality (accuracy on benchmarks like MMLU, HumanEval)

- Feature richness (vision, embeddings, function calling, etc.)

- Model availability (access to the latest models, continued support for deprecated models)

- Customization options (fine-tuning, RAG-ready, plugins)

- API completeness (chat, completions, embeddings, vision)

- Latency and throughput (p50, p95, p99 latencies)

Scoring:

- 90+ = Best-in-class performance; all major features; low latency

- 70-89 = Strong performance; most features; acceptable latency

- 50-69 = Adequate performance; basic features; higher latency

- <50 = Limited capability; missing key features; slow

Examples:

- GPT-4: 92 (excellent accuracy, comprehensive features, 2-5s latency)

- Claude Opus: 90 (excellent reasoning, good features, 2-8s latency)

- Gemini: 85 (good accuracy, growing features, 1-3s latency)

- Llama 2 (self-hosted): 75 (good for open-source, slower, requires tuning)

3. Cost Efficiency (20%)

What’s evaluated:

- Base API cost per 1M tokens

- Volume discounts available

- Infrastructure costs (if self-hosted)

- Total cost of ownership (training, support, tooling)

- Pricing transparency

- Cost predictability

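Scoring (starting band by base API price; the other factors listed above, such as pricing transparency and total cost of ownership, can shift the final score):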

- 90+ = <$1 per 1M tokens (self-hosted or very efficient)

- 70-89 = $1-$5 per 1M tokens (good value)

- 50-69 = $5-$15 per 1M tokens (premium pricing)

- <50 = >$15 per 1M tokens (expensive)

Examples:

- Self-hosted Llama 2: 95 (infrastructure cost only, ~$0.10/1M)

- Gemini Pro: 88 ($0.075 input / $0.30 output per 1M)

- Claude Opus: 70 ($15 per 1M tokens, premium)

- GPT-4: 65 ($30 per 1M tokens, very expensive)

4. Support & Reliability (15%)

What’s evaluated:

- Response time (SLA for support requests)

- Technical support quality (dedicated vs. community)

- Uptime guarantees (SLA %)

- Documentation quality

- Community size and maturity

- Vendor stability (financial health, customer base)

Scoring:

- 90+ = <1hr response, 99.95% SLA, dedicated support, excellent docs

- 70-89 = <4hr response, 99.9% SLA, good support, good docs

- 50-69 = <24hr response, 99% SLA, basic support, adequate docs

- <50 = No SLA, community support only, limited docs

Examples:

- OpenAI: 78 (good docs, <1hr support response for enterprise customers, strong community)

- Anthropic: 82 (excellent docs, growing support, responsive team)

- Google Cloud AI: 80 (enterprise SLA available, good support)

- Open-source: 50 (community support only, no SLA)

5. Governance & Privacy (10%)

What’s evaluated:

- Data usage and retention policies

- Privacy commitments (for example, whether your data is used for model training)

- Terms of service clarity

- Contract terms and flexibility

- Transparency on algorithms

- Data residency options

Scoring:

- 90+ = Transparent terms; no training on customer data; flexible contracts; multi-region residency

- 70-89 = Good terms; no training on customer data by default; reasonable contracts

- 50-69 = Adequate terms; clarification needed; restrictive contracts

- <50 = Opaque terms; unclear data usage; inflexible

Examples:

- OpenAI: 75 (improved privacy terms, but US data residency default)

- Anthropic: 85 (strong privacy commitments, clearer terms)

- Google: 80 (regional options, GDPR-compliant)
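All five dimensions above share the same four score bands. A minimal sketch of that banding follows. The function name `score_band`, the 0-100 scale, and the handling of fractional scores (each threshold treated as an inclusive lower bound) are assumptions made for illustration, not TruthVouch behavior.

```python
def score_band(score: float) -> str:
    """Map a dimension score to one of the four bands used in the lists above.

    Assumptions: scores run from 0 to 100, and each band threshold is an
    inclusive lower bound (so 89.5 falls in the 70-89 band).
    """
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    if score >= 90:
        return "90+"
    if score >= 70:
        return "70-89"
    if score >= 50:
        return "50-69"
    return "<50"


# Example scores taken from the dimension examples above.
print(score_band(82))  # OpenAI, Security & Compliance
print(score_band(50))  # Open-source, Support & Reliability
```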

Overall Score Calculation

Weighted Total = (Security × 0.30) + (Features × 0.25) + (Cost × 0.20) + (Support × 0.15) + (Governance × 0.10)
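The formula above can be sketched in a few lines of Python. The names `DEFAULT_WEIGHTS` and `weighted_total` and the sample vendor scores are hypothetical; only the weights themselves come from this page.

```python
# Default dimension weights from the formula above.
DEFAULT_WEIGHTS = {
    "security": 0.30,
    "features": 0.25,
    "cost": 0.20,
    "support": 0.15,
    "governance": 0.10,
}


def weighted_total(scores: dict, weights: dict = DEFAULT_WEIGHTS) -> float:
    """Return the weighted sum of per-dimension scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing dimension scores: {sorted(missing)}")
    return sum(scores[dim] * weight for dim, weight in weights.items())


# Hypothetical vendor, used only to illustrate the arithmetic:
# 80*0.30 + 70*0.25 + 90*0.20 + 60*0.15 + 50*0.10 = 73.5
sample_scores = {
    "security": 80,
    "features": 70,
    "cost": 90,
    "support": 60,
    "governance": 50,
}
print(round(weighted_total(sample_scores), 1))  # 73.5
```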

Example: Comparing Top 3 Vendors

| Vendor | Security | Features | Cost | Support | Governance | Weighted Total |
|---|---|---|---|---|---|---|
| OpenAI GPT-4 | 82 | 92 | 65 | 78 | 75 | 79.8 |
| Anthropic Claude 3 | 88 | 90 | 70 | 82 | 85 | 83.7 |
| Google Gemini Pro | 80 | 85 | 88 | 80 | 80 | 82.8 |

Interpretation:

- Claude 3 is strongest overall (83.7), with balanced scores across all dimensions

- OpenAI is strongest on features (92) but weakest on cost (65)

- Google is best on cost (88) but lowest on security (80)

Vendor Scorecards

OpenAI

- Overall Score: 79.8

- Strengths: Best-in-class model quality (GPT-4), comprehensive API

- Weaknesses: Expensive, US data residency, less transparent on privacy

- Best for: Organizations wanting state-of-the-art performance

- Not ideal for: Cost-sensitive or privacy-first orgs

Anthropic Claude

- Overall Score: 83.7

- Strengths: Strong safety focus, good reasoning, transparent privacy

- Weaknesses: Slightly slower than GPT-4, premium pricing

- Best for: Orgs prioritizing safety and privacy

- Not ideal for: Cost-optimization focus

Google Gemini

- Overall Score: 82.8

- Strengths: Cost-efficient, good multimodal (vision), regional options

- Weaknesses: Younger ecosystem, smaller community, less proven accuracy

- Best for: Organizations on Google Cloud, cost-conscious

- Not ideal for: Vision-critical tasks requiring maximum accuracy

Self-Hosted (Llama 2 or Mistral)

- Overall Score: 72 (highly variable; depends on your implementation)

- Strengths: Lowest cost, full control, no data sharing

- Weaknesses: Requires infrastructure expertise, lower quality, limited support

- Best for: High-volume, cost-sensitive, compliance-critical workloads

- Not ideal for: Organizations without DevOps expertise

Using Scores for Decisions

Tier 1: Best Overall (Score 85+)

Use for production systems where quality matters; higher cost is acceptable.

Tier 2: Strong (Score 80-84)

Good balance of cost, capability, and support. Suitable for most workloads.

Tier 3: Adequate (Score 75-79)

Good for non-critical, cost-sensitive workloads. Trade-offs on quality or support.

Tier 4: Limited (Score <75)

Consider only for specific use cases where they excel (e.g., cost optimization).
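The tier boundaries above are stated for whole-number scores, while weighted totals are usually fractional. The sketch below shows one way to map a total to a tier. The names `TIERS` and `tier_for` are hypothetical, and treating each boundary as an inclusive lower bound is an assumption rather than documented behavior.

```python
# Tier thresholds from the list above, highest first.
# Assumption: each threshold is an inclusive lower bound, so a fractional
# total such as 84.6 stays in Tier 2 instead of rounding up to Tier 1.
TIERS = [
    (85, "Tier 1: Best Overall"),
    (80, "Tier 2: Strong"),
    (75, "Tier 3: Adequate"),
]


def tier_for(total: float) -> str:
    """Return the decision tier for a weighted total score."""
    for threshold, label in TIERS:
        if total >= threshold:
            return label
    return "Tier 4: Limited"


print(tier_for(82.8))  # Tier 2: Strong
print(tier_for(72))    # Tier 4: Limited
```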

Customizing Vendor Scores

Default weights (30% security, 25% features, 20% cost, 15% support, 10% governance) suit most orgs. Customize if your priorities differ:

For Finance/Compliance-heavy orgs:

- Security: 40%, Governance: 20%, Cost: 15%, Features: 20%, Support: 5%

- Prioritizes compliance and security over cost

For Cost-sensitive startups:

- Cost: 40%, Features: 30%, Security: 20%, Support: 5%, Governance: 5%

- Prioritizes cost and features; accepts a lower support tier

For Safety-critical (healthcare, aviation):

- Security: 35%, Governance: 25%, Features: 25%, Support: 10%, Cost: 5%

- Prioritizes safety and compliance; cost is secondary

See Custom Criteria to create your own weighting.
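To see how a weighting profile changes the ranking, the sketch below applies the three example profiles to the dimension scores from the comparison table. The names `PROFILES`, `VENDORS`, `weighted_total`, and `rank` are hypothetical, and the check that weights sum to 100% is an assumed safeguard, not a documented product rule.

```python
# Example weight profiles from the lists above, in whole percentages.
PROFILES = {
    "finance": {"security": 40, "governance": 20, "cost": 15, "features": 20, "support": 5},
    "startup": {"cost": 40, "features": 30, "security": 20, "support": 5, "governance": 5},
    "safety_critical": {"security": 35, "governance": 25, "features": 25, "support": 10, "cost": 5},
}

# Dimension scores from the three-vendor comparison table.
VENDORS = {
    "OpenAI GPT-4": {"security": 82, "features": 92, "cost": 65, "support": 78, "governance": 75},
    "Anthropic Claude 3": {"security": 88, "features": 90, "cost": 70, "support": 82, "governance": 85},
    "Google Gemini Pro": {"security": 80, "features": 85, "cost": 88, "support": 80, "governance": 80},
}


def weighted_total(scores: dict, weights: dict) -> float:
    """Weighted score using percentage weights that must sum to 100."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100%")
    return sum(scores[dim] * pct for dim, pct in weights.items()) / 100


def rank(profile: str) -> list:
    """Return (vendor, total) pairs sorted from highest to lowest total."""
    weights = PROFILES[profile]
    totals = {name: weighted_total(scores, weights) for name, scores in VENDORS.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


for profile in PROFILES:
    print(profile, rank(profile))
```

With these inputs, the startup weights put Google Gemini Pro first (84.7), while the finance weights put Anthropic Claude 3 first (84.8).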

Updating Scores

Vendor scores are updated quarterly as:

- New models/features release

- Pricing changes

- Security/compliance certifications change

- Performance benchmarks evolve

- Customer reviews and feedback accumulate

Each score includes a last-updated date, and historical versions are available.

Related Topics

- Custom Criteria — Create your own vendor evaluation

- Vendor Templates — RFP and scorecard templates

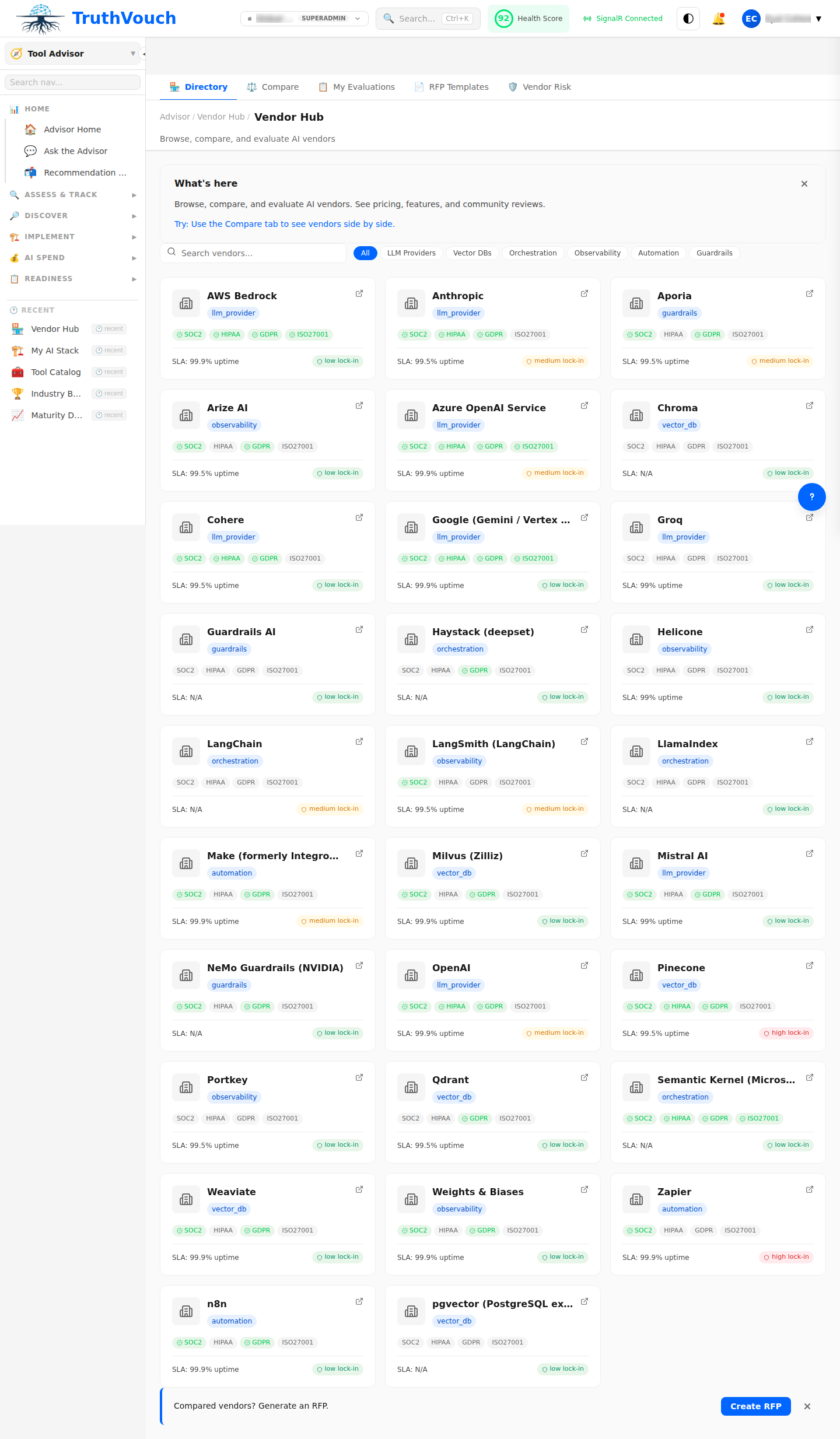

- Tools - Comparison — Side-by-side vendor comparison

Next Steps

- Review vendor scores for your use case

- Identify top 2-3 candidates (closest match to your priorities)

- Request trial access from finalist vendors

- Customize scoring if your needs differ from defaults

- Run cost analysis for finalists (usage projections)

- Present to stakeholders with recommendation
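For the cost-analysis step above, a usage projection can be as simple as multiplying expected token volumes by per-1M-token prices. In the sketch below, the function name `monthly_cost` and the token volumes are hypothetical. The prices are the ones quoted in the Cost Efficiency examples; where this page quotes a single price, applying it to both input and output tokens is an assumption.

```python
def monthly_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Projected monthly spend in USD from token volumes and per-1M-token prices."""
    return ((input_tokens / 1_000_000) * input_price_per_m
            + (output_tokens / 1_000_000) * output_price_per_m)


# Hypothetical monthly usage projection.
INPUT_TOKENS = 200_000_000
OUTPUT_TOKENS = 50_000_000

# (input price, output price) per 1M tokens, from the Cost Efficiency examples.
# Assumption: a single quoted price applies to both input and output.
PRICES = {
    "Gemini Pro": (0.075, 0.30),
    "Claude Opus": (15.0, 15.0),
    "GPT-4": (30.0, 30.0),
}

for vendor, (price_in, price_out) in PRICES.items():
    cost = monthly_cost(INPUT_TOKENS, OUTPUT_TOKENS, price_in, price_out)
    print(f"{vendor}: ${cost:,.2f} per month")
```

Check current vendor price lists before relying on a projection like this, since the figures on this page are refreshed only quarterly.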