EU AI Act Risk Classification

The EU AI Act requires classifying AI systems into four risk levels: Unacceptable, High-Risk, Limited-Risk, and Minimal-Risk. Each level has different compliance requirements. TruthVouch classifies each system automatically from its characteristics, and you can override the result if needed.

The Four Risk Levels

Unacceptable Risk (Prohibited)

Systems that pose unacceptable risk and cannot be deployed in the EU.

Prohibited Practices:

- Social scoring by public authorities

- Subliminal manipulation

- Exploitation of vulnerabilities (for example, targeting children or people with disabilities)

- Real-time biometric ID in public spaces (with limited exceptions)

TruthVouch Action: If a system is classified as Unacceptable, do not deploy it. Redesign the system to remove the prohibited practice.

High-Risk

Systems that require full compliance — risk assessments, documentation, testing, audit trails, human oversight.

Triggers (any of these):

| Trigger | Why | Example |
|---|---|---|
| Autonomous decisions affecting legal rights | Decisions without human appeal | Loan denial, hiring rejection, benefits eligibility |
| Biometric systems | Privacy & identification risk | Facial recognition, fingerprint, iris scan, voice recognition |
| Critical infrastructure | Safety-critical systems | Power grid, water treatment, transportation control |
| Education & training | Fairness & opportunity risk | Student grading, course recommendations, school admissions |
| Employment | Discrimination & fairness risk | Resume screening, performance evaluation, scheduling |
| Pricing & financial services | Economic harm risk | Insurance quotes, credit decisions, lending |
| Large-scale personal data + automated decisions | Scale of impact | Profiling 10K+ people, automated targeting |
| Real-time biometric ID (with exceptions) | Privacy & identification | Law enforcement real-time facial recognition (under strict conditions) |
| Content moderation at scale | Rights impact | Flagging illegal content affecting 100K+ users |

Compliance Requirements (for systems listed in Annex III; technical documentation per Annex IV):

- Risk assessment document

- Technical documentation (Annex IV)

- Bias and fairness testing

- Audit trail implementation

- Human oversight processes

- Post-deployment monitoring

- Incident reporting procedure

Limited-Risk

Systems that require transparency disclosures to users.

Triggers:

- AI that interacts with humans or makes decisions affecting them

- Not autonomous (human review involved)

- Not high-risk data or scope

Examples:

- Chatbots (must disclose AI-generated)

- Content recommendation

- Content moderation (human-in-the-loop)

- Language generation (disclosure required)

Compliance Requirements:

- Transparent disclosure to users that AI is involved

- Clear explanation of what AI does

- User can opt for human alternative (if available)

Minimal-Risk

Systems with no requirements — low risk to individuals or society.

Examples:

- Spell checkers

- Grammar correction

- Search result ranking (non-personalized)

- Email spam filters

- Syntax highlighters

- Accessibility features

Auto-Classification Algorithm

Compliance AI applies rules modeled on the EU AI Act's classification criteria (Article 6 and Annex III):

    IF prohibited_practice THEN Unacceptable
    ELSE IF (autonomous_decision AND legal_effect) THEN High-Risk
    ELSE IF (biometric_system) THEN High-Risk
    ELSE IF (critical_infrastructure) THEN High-Risk
    ELSE IF (personal_decisions + scale > threshold) THEN High-Risk
    ELSE IF (no_autonomous AND transparency_disclosed) THEN Limited-Risk
    ELSE IF (minimal_risk_indicator) THEN Minimal-Risk
    ELSE Limited-Risk (default)

Factors considered:

- System type (LLM, biometric, recommender, etc.)

- Decision scope (autonomous vs. assisted vs. informational)

- Data sensitivity (special categories, scale)

- Jurisdiction (EU trigger)

- User/subject population (children, vulnerable groups)

- Legal/economic impact (binding decisions, financial)
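The rule cascade above can be sketched in Python. This is only an illustration, not Compliance AI's actual implementation: the `SystemProfile` fields and the `classify` function are hypothetical names that mirror the pseudocode's conditions, `personal_decisions + scale > threshold` is read as "personal decisions at a scale at or above a threshold", and the threshold of 10,000 people is assumed from the "Profiling 10K+ people" trigger example.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    UNACCEPTABLE = "Unacceptable"
    HIGH = "High-Risk"
    LIMITED = "Limited-Risk"
    MINIMAL = "Minimal-Risk"


# Assumed value, borrowed from the "Profiling 10K+ people" trigger example.
SCALE_THRESHOLD = 10_000


@dataclass
class SystemProfile:
    """Hypothetical profile; field names mirror the pseudocode's conditions."""
    prohibited_practice: bool = False
    autonomous_decision: bool = False
    legal_effect: bool = False
    biometric_system: bool = False
    critical_infrastructure: bool = False
    personal_decisions: bool = False
    affected_people: int = 0
    transparency_disclosed: bool = False
    minimal_risk_indicator: bool = False


def classify(p: SystemProfile) -> RiskLevel:
    # Rules are evaluated top to bottom; the first match wins.
    if p.prohibited_practice:
        return RiskLevel.UNACCEPTABLE
    if p.autonomous_decision and p.legal_effect:
        return RiskLevel.HIGH
    if p.biometric_system or p.critical_infrastructure:
        return RiskLevel.HIGH
    if p.personal_decisions and p.affected_people >= SCALE_THRESHOLD:
        return RiskLevel.HIGH
    if not p.autonomous_decision and p.transparency_disclosed:
        return RiskLevel.LIMITED
    if p.minimal_risk_indicator:
        return RiskLevel.MINIMAL
    # Nothing matched: fall back to the pseudocode's default.
    return RiskLevel.LIMITED


if __name__ == "__main__":
    chatbot = SystemProfile(transparency_disclosed=True)
    print(classify(chatbot).value)
```

Because the first matching rule wins, the order of the checks matters: a system that matches a prohibited practice is never downgraded by a later rule, and a system that matches nothing falls through to Limited-Risk rather than Minimal-Risk.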

How to Override Classification

If you disagree with auto-classification:

- Go to Registry > [System Name] > Risk Classification

- Click Override

- Select new risk level

- Explain reasoning in comments

- Save

Example override:

- Auto-classified: High-Risk (loan approval recommendation)

- Your override: Limited-Risk

- Reason: “Bank staff review every loan independently; the AI output is informational only. All decisions are made by a human loan officer. No autonomous decisions.”

Compliance AI logs all overrides for audit trail.
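To make the audit-trail idea concrete, here is a minimal sketch of what a logged override could contain. The `OverrideRecord` type, its fields, and the `record_override` helper are assumptions for illustration; they do not describe how Compliance AI stores overrides.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

RISK_LEVELS = ("Unacceptable", "High-Risk", "Limited-Risk", "Minimal-Risk")


@dataclass(frozen=True)
class OverrideRecord:
    """Hypothetical audit entry; frozen so it cannot be edited after logging."""
    system_name: str
    auto_level: str
    override_level: str
    reason: str
    user: str
    timestamp: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def record_override(log, system_name, auto_level, override_level, reason, user):
    """Validate an override and append it to an append-only log (a list here)."""
    if auto_level not in RISK_LEVELS or override_level not in RISK_LEVELS:
        raise ValueError("Unknown risk level")
    if not reason.strip():
        raise ValueError("An override needs a written justification")
    entry = OverrideRecord(system_name, auto_level, override_level, reason, user)
    log.append(entry)
    return entry


if __name__ == "__main__":
    audit_log = []
    record_override(
        audit_log,
        system_name="Loan approval recommendation",
        auto_level="High-Risk",
        override_level="Limited-Risk",
        reason="AI is informational only; a human loan officer decides.",
        user="compliance.officer@example.com",
    )
    print(len(audit_log), audit_log[0].override_level)
```

Keeping both the automatic level and the override level in each entry lets a later reviewer see what was changed, by whom, and why.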

Risk Level Impact on Compliance

| Requirement | Unacceptable | High-Risk | Limited-Risk | Minimal-Risk |
|---|---|---|---|---|
| Deployment in EU | Prohibited | Allowed with compliance | Allowed with disclosure | Allowed |
| Risk Assessment | N/A | Required | Optional | No |
| Technical Documentation | N/A | Required (Annex IV) | No | No |
| Bias Testing | N/A | Required | Recommended | No |
| Audit Trail | N/A | Required | Recommended | No |
| Human Oversight | N/A | Required | Recommended | No |
| Post-Deployment Monitoring | N/A | Required | Recommended | No |
| User Notification | N/A | Yes (“high-risk AI”) | Yes (“AI-generated”) | No |
| Incident Reporting | N/A | Serious incidents: within 15 days of becoming aware, sooner for the most severe cases (Article 73) | No | No |
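The table can also be read as a lookup from risk level to checklist. The sketch below encodes the table's statuses in a plain dictionary; the `REQUIREMENTS` structure and the `checks_for` helper are hypothetical and only illustrate how a scan might select checks by risk level. "Yes" in the User Notification row is treated as "Required".

```python
# Statuses copied from the table; rows marked "No" are simply omitted.
REQUIREMENTS = {
    "High-Risk": {
        "Risk Assessment": "Required",
        "Technical Documentation": "Required",
        "Bias Testing": "Required",
        "Audit Trail": "Required",
        "Human Oversight": "Required",
        "Post-Deployment Monitoring": "Required",
        "User Notification": "Required",
        "Incident Reporting": "Required",
    },
    "Limited-Risk": {
        "Risk Assessment": "Optional",
        "Bias Testing": "Recommended",
        "Audit Trail": "Recommended",
        "Human Oversight": "Recommended",
        "Post-Deployment Monitoring": "Recommended",
        "User Notification": "Required",
    },
    "Minimal-Risk": {},
}


def checks_for(level, include_recommended=False):
    """Return the requirement names that apply to a risk level."""
    if level == "Unacceptable":
        # Deployment is prohibited, so there is no checklist to satisfy.
        raise ValueError("Unacceptable systems cannot be deployed in the EU")
    wanted = {"Required"}
    if include_recommended:
        wanted.add("Recommended")
    return [name for name, status in REQUIREMENTS[level].items() if status in wanted]


if __name__ == "__main__":
    print(checks_for("Limited-Risk"))
    print(checks_for("Limited-Risk", include_recommended=True))
```

Treating Unacceptable as an error rather than an empty checklist avoids the misleading reading that a prohibited system has "nothing to do".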

Risk Classification Workflow

- New system created → Auto-classified based on profile

- Classification displayed → System details show risk level with reasoning

- Team reviews → Confirm or override classification

- Scans adjust requirements → High-risk scans check all requirements; limited-risk scans check only transparency

- Annual review → Re-classify if system changes (scope, data, deployment)

Common Classification Examples

Example 1: Customer Service Chatbot

System: AI chatbot answers customer questions on website

- Auto-inputs: LLM, customer data, real-time interaction, no autonomous decisions

- Auto-classification: Limited-Risk

- Reasoning: User knows it’s AI (chatbot interface), human support available, no binding decisions, no special data

- Requirements: Disclose “This is an AI chatbot” in UI, offer “speak with human” option

Example 2: Hiring Resume Screener

System: ML model filters resumes, recommends candidates to humans

- Auto-inputs: Decision-making, employment impact, training data could have bias

- Auto-classification: High-Risk

- Reasoning: Employment decisions fall under EU AI Act Annex III; significant impact on individuals; potential for discrimination

- Requirements: Full compliance (risk assessment, bias testing, audit trail, human review, monitoring)

Example 3: Loan Approval System (Autonomous)

System: AI autonomously approves/denies loans under €50K

- Auto-inputs: Autonomous decision, legal effect (binding), financial impact, potential discrimination

- Auto-classification: High-Risk (automated credit decisions are not a prohibited practice, so the system is not Unacceptable)

- Reasoning: Article 6 high-risk criteria triggered (creditworthiness assessment, Annex III)

- Requirements: Full high-risk compliance before EU deployment, including human oversight (add human review for all decisions)

Example 4: Email Spam Filter

System: ML model classifies emails as spam or legitimate

- Auto-inputs: No personal decisions, automated utility, no impact on individuals

- Auto-classification: Minimal-Risk

- Reasoning: No legal/economic impact, not a decision system, widely accepted utility

- Requirements: None

Annual Re-Classification

Systems should be re-classified if they change:

- Scope of deployment — Now serving EU users (changes jurisdiction)

- System capabilities — Now autonomous (was assisted before)

- Data processing — Now processes health data (was not before)

- User population — Now used in hiring (was internal only)

Compliance AI flags systems needing re-classification annually.
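One way to picture the re-classification check is as a comparison between two snapshots of a system's profile. The sketch below is an assumption-laden illustration: `ProfileSnapshot`, its four fields (one per change type listed above), and `changed_fields` are hypothetical names, not part of the product.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ProfileSnapshot:
    """Hypothetical snapshot; one field per change type listed above."""
    serves_eu_users: bool        # scope of deployment
    autonomous: bool             # system capabilities
    data_categories: frozenset   # data processing, e.g. {"contact", "health"}
    use_cases: frozenset         # user population / purpose, e.g. {"hiring"}


def changed_fields(old: ProfileSnapshot, new: ProfileSnapshot) -> list:
    """Return the names of fields that differ between two snapshots."""
    return [
        f.name
        for f in fields(ProfileSnapshot)
        if getattr(old, f.name) != getattr(new, f.name)
    ]


def needs_reclassification(old: ProfileSnapshot, new: ProfileSnapshot) -> bool:
    # Any change in a tracked field is treated as a reason to re-classify.
    return bool(changed_fields(old, new))


if __name__ == "__main__":
    last_year = ProfileSnapshot(False, False, frozenset({"contact"}), frozenset({"internal"}))
    today = ProfileSnapshot(True, False, frozenset({"contact", "health"}), frozenset({"internal"}))
    print(changed_fields(last_year, today))
```

Returning the names of the changed fields, rather than a bare yes or no, gives the reviewer a starting point for deciding whether the risk level should move.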

Regulatory Transition

- February 2, 2025: Prohibited practices banned (already in effect)

- August 2, 2026: EU AI Act compliance required for most systems, including High-Risk systems

If your system is High-Risk and in the EU, target August 2026 for full compliance. Start now.

Next Steps

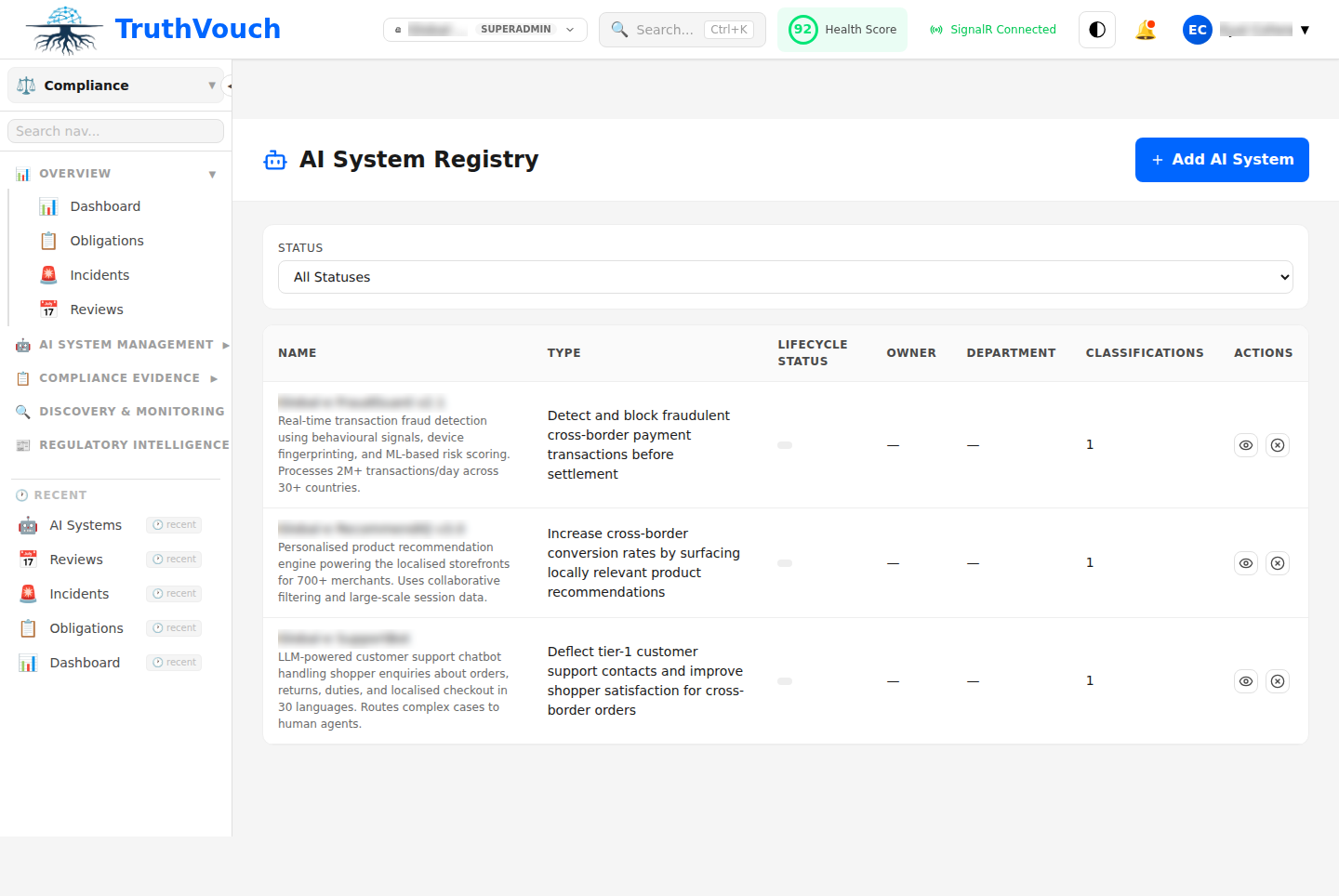

- View your system’s classification: Go to Registry > [System Name]

- Override if needed: Click “Risk Classification” tab

- Understand requirements: See EU AI Act Framework

- Prepare for compliance: Run a scan (see Running Scans)