Agent Types

The Agent Inventory in TruthOps tracks all autonomous AI agents in your organization. Register agents, classify by risk level, assign owners, and monitor health and performance.

What Is an Agent?

An agent is an autonomous AI system that makes decisions or takes actions with minimal human oversight.

Examples:

- Customer service chatbot that responds to inquiries

- Autonomous trading system that makes financial decisions

- Email filtering/triage system that routes incoming messages

- Hiring screening tool that evaluates job applications

- Risk assessment system that approves/denies loans or insurance

Not an agent:

- Chatbot with 100% human approval (fully supervised)

- Chat interface (users control every interaction)

- Report generation tool (provides info; doesn’t decide)

Registering an Agent

Basic Information

- Agent name: Descriptive identifier (e.g., “Customer Support Bot v2.1”)

- Description: What does it do? (1-2 sentences)

- Type: Classification (Customer Service, Hiring, Finance, Moderation, etc.)

- LLM provider: Which LLM powers this? (OpenAI, Anthropic, Custom, etc.)

- Model: Specific model (GPT-4, Claude Opus, etc.)

- Owner: Team responsible for this agent

- Status: Active / Paused / Archived

Metadata

- Environment: Production / Staging / Development

- Launch date: When did this agent go live?

- Users affected: Number of users this agent impacts

- Decisions per day: Expected volume

- Cost: Monthly LLM API cost (optional)
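The registration fields map naturally onto a typed record. The sketch below is a hypothetical Python model, not the TruthOps API: the class, enum, and field names are illustrative, and the validation rules (non-empty name and owner, non-negative counts and cost) are assumptions about what a registration form would reasonably enforce.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Status(Enum):
    ACTIVE = "Active"
    PAUSED = "Paused"
    ARCHIVED = "Archived"


class Environment(Enum):
    PRODUCTION = "Production"
    STAGING = "Staging"
    DEVELOPMENT = "Development"


@dataclass
class AgentRecord:
    """Hypothetical inventory record; fields mirror the lists above."""
    # Basic information
    name: str
    description: str
    agent_type: str      # e.g. "Customer Service", "Hiring"
    llm_provider: str    # e.g. "OpenAI", "Anthropic", "Custom"
    model: str
    owner: str
    status: Status
    # Metadata
    environment: Environment
    launch_date: date
    users_affected: int
    decisions_per_day: int
    monthly_cost_usd: Optional[float] = None  # optional field

    def __post_init__(self) -> None:
        # Assumed validation: reject obviously invalid values at registration.
        if not self.name.strip():
            raise ValueError("agent name is required")
        if not self.owner.strip():
            raise ValueError("every agent needs an owner")
        if self.users_affected < 0 or self.decisions_per_day < 0:
            raise ValueError("counts must be non-negative")
        if self.monthly_cost_usd is not None and self.monthly_cost_usd < 0:
            raise ValueError("cost must be non-negative")


# Illustrative values only.
bot = AgentRecord(
    name="Customer Support Bot v2.1",
    description="Answers product and billing questions; escalates complex cases.",
    agent_type="Customer Service",
    llm_provider="OpenAI",
    model="GPT-4",
    owner="Support Engineering",
    status=Status.ACTIVE,
    environment=Environment.PRODUCTION,
    launch_date=date(2024, 1, 15),
    users_affected=50_000,
    decisions_per_day=4_000,
)
```

Validating in `__post_init__` means an incomplete record fails when it is created rather than surfacing later as a gap in the inventory.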

Risk Classification

Agents are classified by autonomy level and impact:

| Level | Description | Example |
|---|---|---|
| Critical | High autonomy; high-impact decisions | Loan approval, hiring screening, medical diagnosis |
| High | Medium-high autonomy; significant impact | Customer support (deflection 50%+), content moderation |
| Medium | Medium autonomy; moderate impact | Email classification, FAQ answering, suggestions |
| Low | Low autonomy; minimal impact; easy to reverse | Product recommendations, content summarization |
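Because the CRITICAL and HIGH tiers are defined by "ANY of" conditions, the classification rules below are easiest to apply from the most severe tier down, stopping at the first match. The sketch encodes those thresholds; `RiskProfile` and `classify` are hypothetical names, not part of TruthOps. The MEDIUM and LOW criteria overlap, so it assumes an agent is LOW only when autonomy is below 20% and it is recommendation-only, and defaults everything else to MEDIUM.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass
class RiskProfile:
    """Hypothetical inputs to the classification rules."""
    autonomy_pct: float                    # share of decisions humans do NOT override
    max_financial_impact_usd: float = 0.0  # per decision
    health_or_safety: bool = False
    employment: bool = False
    credit_or_lending: bool = False
    regulator_requires_approval: bool = False
    customer_facing: bool = False
    users_affected: int = 0
    decisions_per_day: int = 0
    recommendation_only: bool = False


def classify(p: RiskProfile) -> RiskLevel:
    # CRITICAL if ANY critical criterion holds.
    if (p.max_financial_impact_usd > 100
            or p.health_or_safety
            or p.employment
            or p.credit_or_lending
            or p.regulator_requires_approval
            or p.autonomy_pct > 80):
        return RiskLevel.CRITICAL
    # HIGH if ANY high criterion holds.
    if (p.customer_facing
            or 50 <= p.autonomy_pct <= 80
            or p.users_affected > 10_000
            or p.decisions_per_day > 1_000):
        return RiskLevel.HIGH
    # Assumption: LOW requires both low autonomy and recommendation-only output.
    if p.autonomy_pct < 20 and p.recommendation_only:
        return RiskLevel.LOW
    return RiskLevel.MEDIUM


# A resume screener is CRITICAL regardless of autonomy, because it affects employment.
print(classify(RiskProfile(autonomy_pct=40, employment=True)).name)  # CRITICAL
```

Checking the most severe tier first guarantees that an agent meeting criteria from several tiers always lands in the highest one.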

Classification rules:

CRITICAL if ANY of:

- Affects financial decisions (>$100 impact per decision)

- Affects health/safety decisions

- Affects hiring/employment decisions

- Affects loan/credit decisions

- Regulator requires human approval (e.g., financial services)

- Agent autonomy >80% (humans override <20%)

HIGH if ANY of:

- Affects customer-facing decisions (brand damage if wrong)

- Agent autonomy 50-80%

- Affects >10K users

- Volume >1000 decisions/day

MEDIUM if:

- Primarily informational

- Agent autonomy 20-50%

- Affects <10K users

- Easy to reverse (user can ignore recommendation)

LOW if:

- Minimal user impact

- Agent autonomy <20%

- Very easy to override

- Recommendation-only (no enforcement)

Agent Metadata

Owner & Stakeholders

- Primary owner: Single person responsible for the agent (usually a Product or Engineering lead)

- Secondary stakeholders: Who else cares? (Security, Compliance, Finance, etc.)

- Escalation path: Who to contact if problems arise?

SLA & Performance

- Expected accuracy: Target accuracy % (e.g., 95%)

- Acceptable error rate: What’s tolerable? (e.g., <2% false-positive rate on customer routing)

- Response SLA: How fast must the agent respond? (e.g., <5 sec for customer support)

- Uptime SLA: Availability requirement (e.g., 99.9%)

Data & Privacy

- Data handled: What data does the agent process? (customer names, emails, conversation history, etc.)

- Data residency: Where is data stored? (US, EU, customer-specific)

- PII handling: Does it process personally identifiable information?

- Retention: How long is data kept? (24 hours, 30 days, indefinitely)

- Compliance scope: Which regulations apply? (GDPR, HIPAA, SOX, etc.)

Integration & Architecture

- Input source: Where does the agent get input? (APIs, databases, user interfaces)

- Output channel: Where does the agent send output? (Email, chat, database, API)

- Dependencies: What systems must be working? (LLM API, knowledge base, user DB, etc.)

- Fallback behavior: What happens if the agent fails? (Default response, escalate to human, disable)
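Fallback behavior is easiest to reason about as a wrapper around the agent call. A minimal sketch, assuming a hypothetical `call_agent` callable and the three fallback modes listed above; `run_with_fallback`, `Fallback`, and `AgentDisabled` are illustrative names, not TruthOps APIs.

```python
from enum import Enum
from typing import Callable


class Fallback(Enum):
    DEFAULT_RESPONSE = "default_response"
    ESCALATE = "escalate_to_human"
    DISABLE = "disable"


class AgentDisabled(Exception):
    """Raised when the configured fallback is to take the agent offline."""


def run_with_fallback(
    call_agent: Callable[[str], str],
    request: str,
    mode: Fallback,
    escalate: Callable[[str], str],
    default_response: str = "Sorry, I can't help with that right now. A team member will follow up.",
) -> str:
    try:
        return call_agent(request)
    except Exception as exc:
        # Any failure (LLM API outage, missing dependency, timeout) triggers the fallback.
        if mode is Fallback.DEFAULT_RESPONSE:
            return default_response
        if mode is Fallback.ESCALATE:
            return escalate(request)
        raise AgentDisabled("agent failed; fallback policy is to disable it") from exc


def broken_agent(_: str) -> str:
    raise TimeoutError("LLM API timed out")


print(run_with_fallback(broken_agent, "Where is my order?", Fallback.ESCALATE,
                        escalate=lambda r: f"queued for human review: {r}"))
# prints: queued for human review: Where is my order?
```

Keeping the fallback mode as explicit configuration, rather than burying it in agent code, makes it something the inventory can record and audit.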

Agent Types

1. Customer Service Agents

Purpose: Handle customer inquiries; deflect or escalate to humans

Characteristics:

- High volume (100-10K queries/day)

- Customer-facing (brand-critical)

- Deflection target: 40-60%

- Escalation to humans for complex/sensitive issues

Key Metrics:

- Deflection rate (% resolved without escalation)

- Customer satisfaction (CSAT)

- First-response time

- Accuracy on product/policy questions

Governance focus:

- Hallucination detection (wrong product info)

- Escalation workflow (when to escalate)

- Training data freshness (product knowledge current?)

2. Hiring & Recruiting Agents

Purpose: Screen resumes, score candidates, schedule interviews

Characteristics:

- Critical impact (affects employment decisions)

- Regulatory scrutiny (bias, discrimination risk)

- Requires human approval (cannot fully automate hiring)

- Medium volume (50-500 candidates/day)

Key Metrics:

- Screening accuracy (do top candidates advance?)

- False negative rate (good candidates incorrectly rejected)

- Bias metrics (disparate impact analysis by demographics)

- Time-to-score

Governance focus:

- Bias & fairness (audit for discrimination)

- Explainability (can you explain rejection decision?)

- Human oversight (audit random decisions)

- Compliance (maintain audit trail for legal)
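Disparate impact analysis usually starts by comparing selection rates across groups. A widely used screen is the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group's rate is flagged for review. The sketch below illustrates that check; the function name and input shape are assumptions, and the result is a screening heuristic, not a legal determination.

```python
from typing import Dict, Tuple


def adverse_impact_ratios(
    outcomes: Dict[str, Tuple[int, int]],
    threshold: float = 0.8,
) -> Dict[str, Tuple[float, bool]]:
    """Map each group to (impact ratio, flagged).

    outcomes: group -> (candidates advanced, candidates screened).
    Impact ratio = group selection rate / highest group selection rate.
    """
    rates = {
        group: advanced / screened
        for group, (advanced, screened) in outcomes.items()
        if screened > 0
    }
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        # Nobody advanced in any group: ratios are undefined, so nothing is flagged.
        return {group: (1.0, False) for group in rates}
    return {group: (rate / best, rate / best < threshold) for group, rate in rates.items()}


# Illustrative numbers only.
for group, (ratio, flagged) in adverse_impact_ratios(
        {"group_a": (48, 120), "group_b": (30, 110)}).items():
    print(f"{group}: {ratio:.2f} flagged={flagged}")
# group_a: 1.00 flagged=False
# group_b: 0.68 flagged=True
```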

3. Content Moderation Agents

Purpose: Flag inappropriate content (spam, harassment, policy violations)

Characteristics:

- High volume (millions of items/day)

- Moderate accuracy tolerable (low-confidence flags go to human review)

- False positive cost (users frustrated if content wrongly removed)

- Confidence-based routing (high confidence → auto-remove; low confidence → human review)
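Confidence-based routing amounts to two thresholds on the model's violation score. A minimal sketch; the threshold values and names are placeholders, not TruthOps defaults, and would be tuned against the precision you can tolerate for auto-removal. Items below the lower threshold are assumed not to be flagged at all, since the text only describes routing for flagged items.

```python
from enum import Enum


class Route(Enum):
    AUTO_REMOVE = "auto_remove"
    HUMAN_REVIEW = "human_review"
    ALLOW = "allow"


def route(violation_confidence: float,
          remove_at: float = 0.95,
          review_at: float = 0.60) -> Route:
    """Route an item by the model's confidence that it violates policy.

    Both thresholds are illustrative placeholders.
    """
    if not 0.0 <= violation_confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if violation_confidence >= remove_at:
        return Route.AUTO_REMOVE   # high confidence: act automatically
    if violation_confidence >= review_at:
        return Route.HUMAN_REVIEW  # uncertain: send to the review queue
    return Route.ALLOW             # below both thresholds: not flagged
```

Raising `remove_at` shifts more items into the human review queue, trading reviewer workload for fewer wrongful automatic removals.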

Key Metrics:

- Precision (% of flagged items actually violate policy)

- Recall (% of violations caught)

- False positive rate

- Time-to-decision
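Precision, recall, and false positive rate all fall out of a confusion matrix built from a human-labelled sample of moderation decisions. A short sketch; the class and function names are illustrative, and returning 0.0 for an empty denominator is an assumed convention for small samples.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ModerationCounts:
    true_positives: int   # flagged and actually violating
    false_positives: int  # flagged but compliant
    false_negatives: int  # violating but not flagged
    true_negatives: int   # compliant and not flagged


def _ratio(num: int, den: int) -> float:
    # Avoid division by zero when a sample has no items in a category.
    return num / den if den else 0.0


def moderation_metrics(c: ModerationCounts) -> Dict[str, float]:
    return {
        # Share of flagged items that actually violate policy.
        "precision": _ratio(c.true_positives, c.true_positives + c.false_positives),
        # Share of actual violations that were caught.
        "recall": _ratio(c.true_positives, c.true_positives + c.false_negatives),
        # Share of compliant items that were wrongly flagged.
        "false_positive_rate": _ratio(c.false_positives, c.false_positives + c.true_negatives),
    }


# Illustrative counts from a hypothetical 10,000-item labelled sample.
sample = ModerationCounts(true_positives=780, false_positives=220,
                          false_negatives=120, true_negatives=8880)
print(moderation_metrics(sample))
```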

Governance focus:

- Appeal mechanism (users can contest removal)

- Human review queue (backup when agent unsure)

- Policy drift (does moderation match company policy?)

4. Financial & Risk Assessment Agents

Purpose: Loan approval, credit scoring, fraud detection, investment decisions

Characteristics:

- Critical impact (financial/legal consequences)

- Regulatory compliance (must meet banking/insurance regulations)

- High accuracy required (>99% for some use cases)

- Explainability mandatory (regulatory requirement)

- Requires human sign-off (cannot fully automate)

Key Metrics:

- Accuracy (predicted default rates match observed default rates for similar loans)

- Fairness metrics (no discrimination by protected classes)

- ROC-AUC (how well scores separate good and bad risks)

- Precision (% of approved loans that turn out to be good)
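ROC-AUC equals the probability that a randomly chosen positive example is scored above a randomly chosen negative one. The sketch below computes it directly from that definition (ties count half). It is O(n·m), which is fine for audit samples; production pipelines typically use a library routine such as scikit-learn's `roc_auc_score`. The labels and scores are illustrative.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC via pairwise comparison; label 1 = positive class."""
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count half
    return wins / (len(pos) * len(neg))


# Illustrative: 1 = loan defaulted, score = predicted default probability.
print(roc_auc([1, 0, 1, 0, 0], [0.9, 0.3, 0.6, 0.65, 0.1]))  # ~0.833: 5 of 6 pairs ranked correctly
```

An AUC of 0.5 means the model ranks no better than chance; 1.0 means every defaulted loan was scored above every repaid one.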

Governance focus:

- Regulatory compliance (audit for discrimination, explainability)

- Explainability (can you justify each decision?)

- Bias & fairness (regular fairness audits)

- Human review (sample approvals for QA)

5. Data & Insights Agents

Purpose: Analyze data; generate reports; answer analytics questions

Characteristics:

- Medium autonomy (humans verify numbers)

- Medium volume (hundreds of queries/day)

- Accuracy critical (wrong numbers affect decisions)

- Integration with data warehouse

Key Metrics:

- Accuracy (do generated reports match truth?)

- Latency (how long to answer query?)

- Coverage (% of queries agent can answer)

- User satisfaction

Governance focus:

- Data accuracy (agent doesn’t misread source data)

- Bias in analysis (are conclusions objective?)

- Data access control (agent respects permissions)

Viewing Agent Inventory

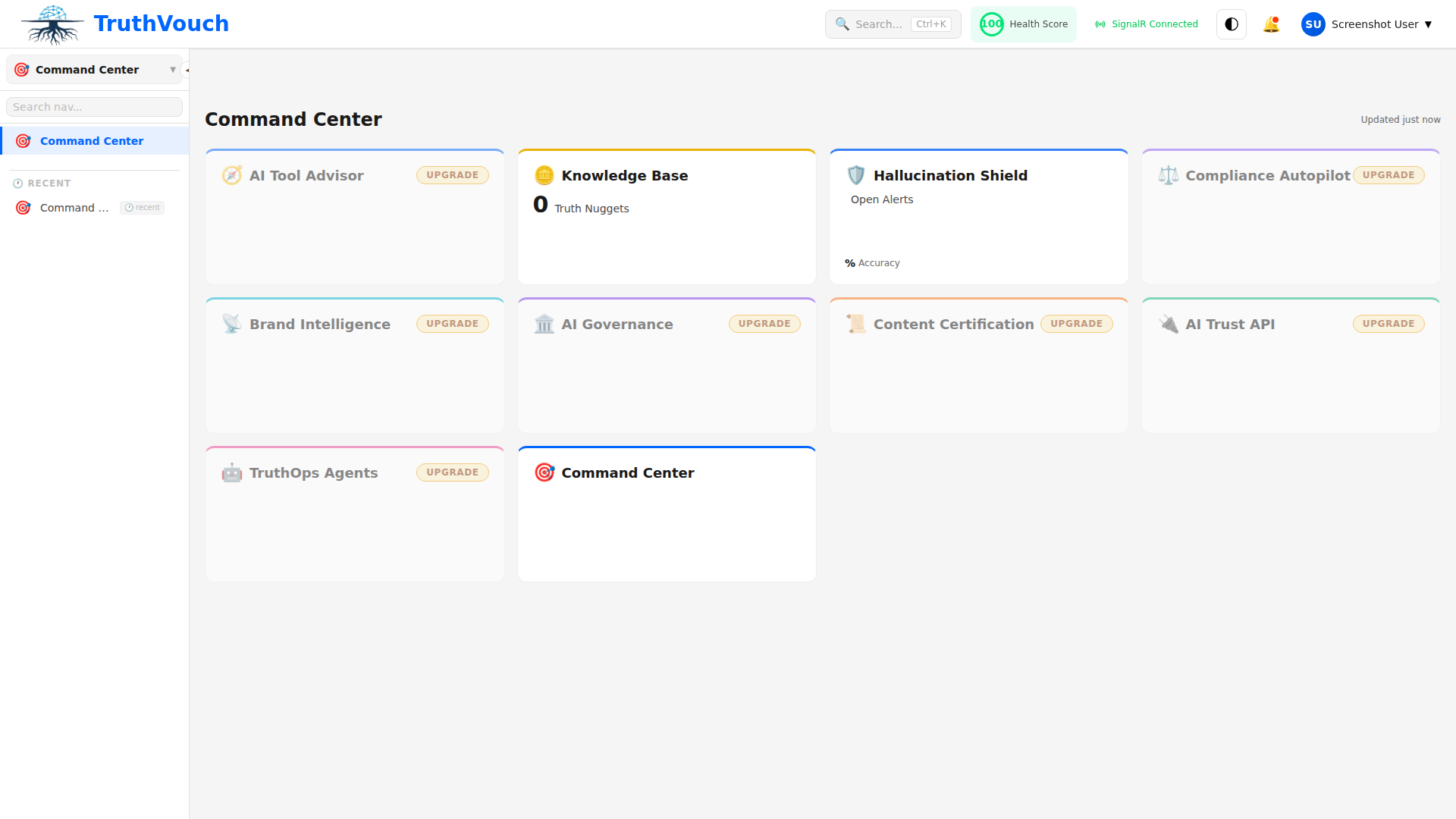

Path: TruthOps → Agents

List View

TruthVouch automatically displays all registered agents with key metrics:

| Name | Type | Owner | Risk | Status | Users | Accuracy | Uptime |
|---|---|---|---|---|---|---|---|
| Customer Support Bot | Service | John Smith | HIGH | Active | 50K | 87% | 99.8% |
| Resume Screener | Hiring | Sarah Chen | CRITICAL | Active | 200 | 92% | 99.5% |
| Content Moderation v3 | Moderation | Mike Davis | HIGH | Active | 1M | 78% | 99.9% |

Click any agent to see its full metadata, configuration, performance metrics, and recent alerts.

Filter & Search

- By risk level: Critical, High, Medium, Low

- By type: Service, Hiring, Finance, Moderation, etc.

- By owner: All agents owned by a specific person

- By status: Active, Paused, Archived

- By compliance scope: All agents handling PII, HIPAA data, etc.

- Search: Agent name or description
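Filters like these typically combine as an AND across facets. A minimal sketch over an in-memory list, assuming each agent is a dict with the hypothetical keys shown; TruthOps' own query interface is not described here, and the compliance tags in the example inventory are illustrative.

```python
from typing import Dict, Iterable, List, Optional, Set

Agent = Dict[str, object]


def filter_agents(
    agents: Iterable[Agent],
    risk: Optional[Set[str]] = None,
    agent_type: Optional[Set[str]] = None,
    owner: Optional[str] = None,
    status: Optional[Set[str]] = None,
    compliance: Optional[str] = None,
    search: Optional[str] = None,
) -> List[Agent]:
    """Return agents matching every filter that is set (None means no filter)."""
    needle = search.lower() if search else None
    results = []
    for a in agents:
        if risk and a["risk"] not in risk:
            continue
        if agent_type and a["type"] not in agent_type:
            continue
        if owner and a["owner"] != owner:
            continue
        if status and a["status"] not in status:
            continue
        if compliance and compliance not in a.get("compliance", ()):
            continue
        # Case-insensitive match on name or description.
        if needle and needle not in f"{a['name']} {a.get('description', '')}".lower():
            continue
        results.append(a)
    return results


inventory = [
    {"name": "Customer Support Bot", "type": "Service", "owner": "John Smith",
     "risk": "HIGH", "status": "Active", "compliance": ["PII"]},
    {"name": "Resume Screener", "type": "Hiring", "owner": "Sarah Chen",
     "risk": "CRITICAL", "status": "Active", "compliance": ["PII"]},
    {"name": "Content Moderation v3", "type": "Moderation", "owner": "Mike Davis",
     "risk": "HIGH", "status": "Active", "compliance": []},
]
names = [a["name"] for a in filter_agents(inventory, risk={"HIGH", "CRITICAL"}, compliance="PII")]
# names == ["Customer Support Bot", "Resume Screener"]
```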

Agent Health Dashboard

TruthOps automatically monitors and displays health metrics for each active agent:

Availability:

- Uptime % (99.8%)

- Last incident (time, duration)

- Incident frequency (incidents/month)

Performance:

- Accuracy % (target: 95%, current: 87%)

- Response latency (target: <2s, actual: 1.5s)

- Throughput (queries/minute)

Cost:

- Daily cost (LLM API)

- Monthly forecast

- Cost trend (up/down month-over-month)
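One simple way to produce a monthly forecast is a run-rate projection. The sketch below assumes the remaining days of the month cost the same as the average of the last `window` days; TruthOps' actual forecasting method is not described here, so treat this and the function names as illustrative.

```python
import calendar
from typing import Sequence


def forecast_month_cost(daily_costs: Sequence[float], year: int, month: int,
                        window: int = 7) -> float:
    """Project a month's total LLM API cost from month-to-date daily costs.

    daily_costs: one cost per elapsed day of the month, oldest first.
    Remaining days are projected at the mean of the last `window` days.
    """
    days_in_month = calendar.monthrange(year, month)[1]
    spent = sum(daily_costs)
    remaining_days = days_in_month - len(daily_costs)
    if not daily_costs or remaining_days <= 0:
        # No data to extrapolate from, or the month is already complete.
        return spent
    recent = daily_costs[-window:]
    run_rate = sum(recent) / len(recent)
    return spent + run_rate * remaining_days


def month_over_month_pct(this_month: float, last_month: float) -> float:
    """Percent change behind the up/down cost trend indicator."""
    if last_month == 0:
        raise ValueError("no cost baseline for last month")
    return (this_month - last_month) / last_month * 100


# Illustrative: first 10 days of April 2024 at $40/day.
projected = forecast_month_cost([40.0] * 10, 2024, 4)  # 400 spent + 20 days x $40 = 1200.0
print(projected, month_over_month_pct(projected, 1000.0))
```

A short trailing window reacts quickly to traffic changes; a longer one smooths out day-to-day spikes.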

Alerts:

- Accuracy dropped below threshold

- Response time exceeded SLA

- Uptime fell below SLA

- Fallback triggered (agent failed; using backup)
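Each alert is a comparison between a live metric and the targets set under SLA & Performance. A minimal sketch of that evaluation; the `Targets` and `AgentHealth` structures and the alert wording are hypothetical, and the example values reuse figures quoted in this page.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Targets:
    min_accuracy_pct: float
    max_latency_s: float
    min_uptime_pct: float


@dataclass
class AgentHealth:
    accuracy_pct: float
    latency_s: float
    uptime_pct: float
    fallbacks_triggered: int  # since the last evaluation


def evaluate_alerts(h: AgentHealth, t: Targets) -> List[str]:
    alerts = []
    if h.accuracy_pct < t.min_accuracy_pct:
        alerts.append(f"Accuracy {h.accuracy_pct}% below target {t.min_accuracy_pct}%")
    if h.latency_s > t.max_latency_s:
        alerts.append(f"Response time {h.latency_s}s exceeds SLA {t.max_latency_s}s")
    if h.uptime_pct < t.min_uptime_pct:
        alerts.append(f"Uptime {h.uptime_pct}% below SLA {t.min_uptime_pct}%")
    if h.fallbacks_triggered > 0:
        alerts.append(f"Fallback triggered {h.fallbacks_triggered} time(s)")
    return alerts


# Accuracy 87% vs 95% target, latency 1.5s vs <2s, uptime 99.8% vs 99.9% SLA.
targets = Targets(min_accuracy_pct=95, max_latency_s=2.0, min_uptime_pct=99.9)
health = AgentHealth(accuracy_pct=87, latency_s=1.5, uptime_pct=99.8, fallbacks_triggered=0)
for alert in evaluate_alerts(health, targets):
    print(alert)
# Accuracy 87% below target 95%
# Uptime 99.8% below SLA 99.9%
```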

Related Topics

- Autonomy Levels — Define agent autonomy and controls

- Configuration — Configure agent policies and rules

- Monitoring — Real-time agent health and performance

Next Steps

- Audit existing agents — What autonomous AI systems do you have?

- Register in TruthOps — Add each agent with metadata

- Classify by risk — Critical/High/Medium/Low

- Assign owners — Accountability for each agent

- Set performance targets — Accuracy, latency, uptime SLAs

- Monitor health — Track against targets