Autonomy Levels

Define the autonomy level of each AI agent: how much it can decide independently before requiring human approval. TruthOps automatically enforces controls, approval gates, and escalation policies by autonomy level.

Agents operate at different autonomy levels depending on risk and impact:

| Level | Autonomy | Human Involvement | Best For | Examples |
|---|---|---|---|---|
| Level 5 | 100% | No human involvement | Low-risk, easily reversible | Summarization, recommendations |
| Level 4 | 80-90% | Human review after action | Medium-risk; human monitors | Content suggestions, email filtering |
| Level 3 | 50-70% | Human approval before action | High-risk items | Customer service escalation, data analysis |
| Level 2 | 20-50% | Human decides; AI assists | Critical decisions | Hiring screening (human decides), loan approval |
| Level 1 | <20% | Human in the loop | Informational only | AI provides info; human decides everything |
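The table can also be held as a small lookup structure so that other tooling refers to levels by name rather than by bare number. The sketch below is illustrative only: `AutonomyLevel`, `LevelProfile`, and `LEVEL_PROFILES` are hypothetical names, not part of TruthOps, and the values are copied from the table above.

```python
from dataclasses import dataclass
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Levels from the table; a higher number means more autonomy."""
    INFORMATIONAL = 1
    HUMAN_DECIDES = 2
    ESCALATION_BASED = 3
    AUTONOMOUS_MONITORED = 4
    FULLY_AUTONOMOUS = 5


@dataclass(frozen=True)
class LevelProfile:
    autonomy: str            # share of decisions the agent makes on its own
    human_involvement: str   # what the human does at this level


# Hypothetical lookup table; the strings are taken from the table above.
LEVEL_PROFILES = {
    AutonomyLevel.FULLY_AUTONOMOUS: LevelProfile("100%", "No human involvement"),
    AutonomyLevel.AUTONOMOUS_MONITORED: LevelProfile("80-90%", "Human review after action"),
    AutonomyLevel.ESCALATION_BASED: LevelProfile("50-70%", "Human approval before action"),
    AutonomyLevel.HUMAN_DECIDES: LevelProfile("20-50%", "Human decides; AI assists"),
    AutonomyLevel.INFORMATIONAL: LevelProfile("<20%", "Human in the loop"),
}
```

Because `AutonomyLevel` is an `IntEnum`, levels compare naturally, for example `AutonomyLevel.HUMAN_DECIDES < AutonomyLevel.ESCALATION_BASED`.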

Level 5: Fully Autonomous (No Human Involvement)

Description: Agent makes decisions and takes actions without human oversight.

Governance rules:

- Low-risk decisions only (easily reversible if wrong)

- Error impact <$100 or equivalent

- User satisfaction not heavily affected by mistakes

- No regulatory constraints

- Agent accuracy >95% established over time

Examples:

- Email subject line summarization

- Product recommendation (user can ignore)

- Document tagging/categorization

- Timezone detection from location

Controls:

- Monitoring for accuracy drift

- Alert if accuracy drops below baseline

- Logging of all decisions (audit trail)

- User option to override (for most use cases)

Approval workflow:

Agent makes decision → Logs it → No human review

(Monitoring system tracks accuracy)

Level 4: Autonomous with Monitoring

Description: Agent makes decisions autonomously, but humans monitor for errors.

Governance rules:

- Low-to-medium risk

- Error impact $100-$1K or equivalent

- Reversible if problematic

- Easy to override or correct

- Agent accuracy >90%

Examples:

- Email filtering (filter to folder, user can see all mail)

- Chat routing (route to department, can be reassigned)

- FAQ matching (suggests answer, user can correct)

- Content categorization (auto-tags, can be edited)

Controls:

- Real-time accuracy monitoring

- Audit sampling (random review of decisions)

- User feedback mechanism (did we get it right?)

- Alert thresholds (notify if error rate >5%)

- Quick override capability
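The alert threshold and audit sampling controls above could be sketched roughly as follows. This is a minimal illustration, not TruthOps behavior: `ErrorRateMonitor`, `sample_for_audit`, the 200-decision window, and the 5% sampling rate are assumptions, while the 5% alert threshold comes from the list above.

```python
import random
from collections import deque


class ErrorRateMonitor:
    """Tracks a rolling error rate and reports when it exceeds a threshold."""

    def __init__(self, alert_threshold=0.05, window=200):
        self.alert_threshold = alert_threshold
        # Only the most recent `window` outcomes are kept; True means an error.
        self.outcomes = deque(maxlen=window)

    def record(self, was_error):
        self.outcomes.append(bool(was_error))

    def error_rate(self):
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        # Matches the "notify if error rate >5%" control (strictly greater).
        return self.error_rate() > self.alert_threshold


def sample_for_audit(decision_ids, rate=0.05, rng=None):
    """Randomly selects roughly `rate` of the decisions for human review."""
    if rng is None:
        rng = random.Random()
    return [d for d in decision_ids if rng.random() < rate]
```

In use, each user correction or failed audit would be passed to `record(True)`, and `should_alert()` would be checked after each update.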

Approval workflow:

Agent makes decision → Logs it → Monitoring system tracks accuracy → Alert if problems detected → Human investigates if needed

Level 3: Escalation-Based (Mixed Autonomy)

Description: Agent makes routine decisions; escalates complex/risky cases to humans.

Governance rules:

- Medium-to-high risk

- Agent handles clear cases (high confidence)

- Unclear/risky cases escalated to human

- Human approves or overrides decision

- Agent accuracy >85% on escalated cases (humans correct the rest)

Examples:

- Customer service (resolve common questions, escalate complex issues)

- Data classification (auto-tag obvious cases, route ambiguous to human)

- Fraud detection (flag high-confidence fraud, medium-confidence to analyst)

- Support ticket routing (obvious categories auto-routed, ambiguous to queue)

Controls:

- Escalation rules (when to escalate?)

- Confidence thresholds (low confidence → escalate)

- Escalation SLA (how long human takes to review?)

- Override tracking (how often human overrides agent?)

- Performance metrics (agent accuracy on non-escalated vs. escalated)
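Confidence thresholds and escalation SLAs can be combined into a single routing function. The sketch below uses the values from this section's example rules (90% and 70% confidence, 4-hour and 8-hour SLAs); the `Routing` type and `route_decision` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Routing:
    action: str                # "execute" or "escalate"
    reviewer: Optional[str]    # who reviews the case, if escalated
    sla_hours: Optional[int]   # review deadline, if escalated


def route_decision(confidence, execute_above=0.90, expert_below=0.70):
    """Routes one decision based on the agent's confidence (0.0 to 1.0)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence > execute_above:
        return Routing("execute", None, None)
    if confidence >= expert_below:
        # Middle band: a supervisor reviews within 4 hours.
        return Routing("escalate", "supervisor", 4)
    # Lowest band: an expert reviews within 8 hours.
    return Routing("escalate", "expert", 8)
```

Keeping the thresholds as parameters makes it easier to tune them per agent as override tracking data accumulates.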

Approval workflow:

Agent decides → High confidence? → Action

Agent decides → Low confidence? → Escalate to human → Human approves/overrides

Example rules:

IF agent confidence > 90% THEN execute decision immediately

IF agent confidence 70-90% THEN escalate to supervisor (4-hour SLA)

IF agent confidence < 70% THEN escalate to expert (8-hour SLA)

Level 2: Human-Decides (AI Assists)

Description: Humans make the final decision; AI provides analysis/recommendation.

Governance rules:

- High-risk decisions (significant consequences)

- Regulatory requirement for human approval

- Significant financial, legal, or safety impact

- Explainability required (why did AI recommend this?)

- Human override always possible

Examples:

- Hiring decisions (AI scores candidates; human hires)

- Loan approval (AI assesses risk; loan officer approves)

- Medical diagnosis (AI suggests; doctor diagnoses)

- Legal discovery (AI flags relevant documents; lawyer reviews)

Controls:

- Explainability (agent must explain reasoning)

- Audit trail (document human decision + rationale)

- Bias monitoring (fairness metrics tracked)

- Approval SLA (how long for human review?)

- Appeal process (challenged decisions reviewed)
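The explainability and audit trail controls suggest a record that pairs the agent's recommendation with the human's decision and rationale. The following is a minimal sketch under that assumption; `Recommendation` and `HumanDecision` are hypothetical types, not a TruthOps schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Recommendation:
    subject: str
    suggestion: str
    reasoning: tuple        # the agent's explanation, one entry per point
    concerns: tuple = ()


@dataclass(frozen=True)
class HumanDecision:
    recommendation: Recommendation
    reviewer: str
    outcome: str            # "approve", "override", or "escalate"
    rationale: str
    decided_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self):
        if self.outcome not in {"approve", "override", "escalate"}:
            raise ValueError(f"unknown outcome: {self.outcome}")
        # The audit trail needs both the AI's reasoning and the human's rationale.
        if not self.recommendation.reasoning:
            raise ValueError("recommendation must include reasoning")
        if not self.rationale.strip():
            raise ValueError("a rationale is required for the audit trail")
```

Rejecting records without reasoning or rationale at construction time means incomplete entries never reach the audit log.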

Approval workflow:

Agent analyzes → Provides recommendation + explanation → Human reviews → Human approves / overrides / escalates

Example:

Hiring Agent Output:

Candidate: John Smith

Score: 78/100 (Recommend: Pass to interviews)

Reasoning:

- Education: Strong match (Stanford, CS degree)

- Experience: 5 years relevant (above 3-year requirement)

- Culture fit: Moderate (needs verification)

Concerns:

- Gap in employment 2022-2023 (not explained)

- Limited leadership experience (prefer > 2 years)

Human Decision: Pass to technical interview (concerns noted; worth talking to candidate)

Level 1: Informational (Human in the Loop)

Description: Agent provides information; humans make all decisions.

Governance rules:

- Critical decisions only

- High regulatory scrutiny

- No autonomy; agent is purely advisory

- Human accountability for decisions

- Full explainability required

Examples:

- Compliance advice (agent provides guidance; lawyer decides)

- Strategic recommendations (AI analyzes; executive decides)

- Risk assessment (AI highlights risks; manager decides)

- Data insights (AI generates reports; analyst interprets)

Controls:

- No automation (agent can’t execute)

- Explainability required (human needs to understand AI reasoning)

- Source citations (where did this info come from?)

- Confidence/uncertainty (what’s AI confident/uncertain about?)

- Override tracking (human decision always logged)

Approval workflow:

Agent provides information → Human reads/understands → Human decides → Human executes decision (agent not involved)

Setting Autonomy Levels

Step 1: Assess Risk

For each agent, evaluate:

Impact if wrong:

- Financial impact ($)

- User satisfaction impact (how many affected? how frustrated?)

- Regulatory/legal risk

- Brand risk

- Safety risk

Likelihood of error:

- Agent accuracy (from testing)

- Historical error rate

- Confidence in agent capability

Reversibility:

- Can error be easily fixed?

- Cost to fix?

- Time to fix?

Step 2: Choose Level

Use this guide:

Impact < $100 AND reversible → Level 5 (fully autonomous)

Impact $100-1K AND reversible → Level 4 (autonomous + monitoring)

Impact $1K-10K AND semi-reversible → Level 3 (escalation)

Impact > $10K OR regulatory → Level 2 (human decides)

Critical OR highly regulated → Level 1 (informational only)

Step 3: Implement Controls

Once the level is chosen, configure its controls:

Level 5: Monitoring + logging + audit trail

Level 4: Real-time monitoring + accuracy tracking + alerts + sampling

Level 3: Escalation rules + SLA + confidence thresholds + override tracking

Level 2: Explainability requirement + approval workflow + audit trail + appeals

Level 1: Info-only interface + explainability + source citation + decision logging
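Steps 2 and 3 can be sketched as a function that maps a risk assessment to a level, plus a lookup of the controls for that level. The names `RiskAssessment`, `choose_level`, and `CONTROLS` are hypothetical. The guide does not cover every combination (for example, a low-impact but irreversible error), so this sketch assumes that uncovered cases fall to the more restrictive level.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskAssessment:
    impact_usd: float
    reversibility: str        # "full", "partial", or "none"
    regulated: bool = False   # regulatory requirement applies
    critical: bool = False    # critical or highly regulated decision


# Controls per level, taken from the Step 3 list.
CONTROLS = {
    5: ["monitoring", "logging", "audit trail"],
    4: ["real-time monitoring", "accuracy tracking", "alerts", "sampling"],
    3: ["escalation rules", "SLA", "confidence thresholds", "override tracking"],
    2: ["explainability requirement", "approval workflow", "audit trail", "appeals"],
    1: ["info-only interface", "explainability", "source citation", "decision logging"],
}


def choose_level(risk):
    """Applies the Step 2 guide, checking the most restrictive rules first."""
    if risk.reversibility not in {"full", "partial", "none"}:
        raise ValueError(f"unknown reversibility: {risk.reversibility}")
    if risk.critical:
        return 1
    if risk.regulated or risk.impact_usd > 10_000:
        return 2
    if risk.impact_usd < 100 and risk.reversibility == "full":
        return 5
    if risk.impact_usd <= 1_000 and risk.reversibility == "full":
        return 4
    if risk.reversibility in {"full", "partial"}:
        return 3
    # Assumption: irreversible errors are not covered by the guide,
    # so they default to Level 2 (human decides).
    return 2
```

For example, `CONTROLS[choose_level(RiskAssessment(500, "full"))]` returns the Level 4 control list.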

Changing Autonomy Levels

Increasing Autonomy

When: Agent proves reliability over time

Example: An agent starts at Level 2 (human approves); after 6 months at >98% accuracy with no issues, it moves to Level 3 (escalation only).

Process:

- Demonstrate sustained accuracy (3-6 months)

- Get stakeholder approval (business + compliance)

- Define escalation rules

- Pilot on subset of decisions (10-20%)

- Monitor for 1 week

- Roll out fully if successful
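The first steps of this process lend themselves to a simple eligibility check and a deterministic pilot split. The sketch below is an assumption-laden illustration: the function names are hypothetical, the defaults (3 months, 98% accuracy, 10% pilot) are taken from the ranges and example above, and hashing the decision ID is just one way to pick a stable pilot subset.

```python
import hashlib


def eligible_for_promotion(monthly_accuracy, approvals,
                           min_months=3, min_accuracy=0.98):
    """True if recent accuracy is sustained and both sign-offs are present."""
    recent = monthly_accuracy[-min_months:]
    sustained = (
        len(recent) >= min_months
        and all(a > min_accuracy for a in recent)
    )
    approved = {"business", "compliance"} <= set(approvals)
    return sustained and approved


def in_pilot(decision_id, pilot_fraction=0.10):
    """Assigns a decision to the pilot group, stable across runs."""
    digest = hashlib.sha256(decision_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 10_000
    return bucket < pilot_fraction * 10_000
```

A hash-based split keeps the same decision in the same group on every run, which makes pilot results easier to compare against the non-pilot population.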

Decreasing Autonomy

When: Agent performance degrades or new risk emerges

Example: Agent accuracy drops to 85% (below 90% target) → temporarily move from Level 4 to Level 3 (escalate low-confidence cases) until fixed

Process:

- Detect degradation (automated alert)

- Reduce autonomy immediately (safety first)

- Assess root cause (data drift? model version? input change?)

- Fix root cause

- Re-test before restoring autonomy
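The step-down and restore logic can be sketched as a small state holder that remembers which level to return to once the fix is re-tested. `AgentState` and both functions are hypothetical, and stepping down by exactly one level is an assumption based on the Level 4 to Level 3 example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentState:
    level: int
    target_accuracy: float
    restore_level: Optional[int] = None   # set while autonomy is reduced


def handle_accuracy_reading(state, accuracy):
    """Steps down one level as soon as accuracy falls below target."""
    already_reduced = state.restore_level is not None
    if accuracy < state.target_accuracy and not already_reduced and state.level > 1:
        state.restore_level = state.level
        state.level -= 1
    return state


def restore_after_retest(state, retest_accuracy):
    """Restores the original level only if the re-test meets the target."""
    if state.restore_level is not None and retest_accuracy >= state.target_accuracy:
        state.level = state.restore_level
        state.restore_level = None
    return state
```

With `AgentState(level=4, target_accuracy=0.90)`, a reading of 0.85 moves the agent to Level 3, and a later re-test at or above 0.90 moves it back to Level 4.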

Autonomy Controls

Confidence Thresholds

Agent makes decision only if confidence ≥ threshold:

Level 5: No threshold (all decisions executed)

Level 4: Threshold 80% (monitor accuracy)

Level 3: Threshold 70% (escalate if below)

Level 2: No threshold (human reviews all; AI assists)

Approval Gates

Humans must approve before action:

Level 4: Approval sample (random 5-10% reviewed)

Level 3: Approval conditional (only escalated decisions)

Level 2: All decisions require approval

Escalation Rules

Define when to escalate to human:

IF confidence < threshold THEN escalate

IF category = "sensitive" THEN escalate

IF error_rate > 0.05 THEN escalate

IF user_override_requested THEN escalate

Audit & Appeal

Users/stakeholders can appeal decisions:

User: "I disagree with this decision"

Appeal → Review by higher authority → Override if warranted

All appeals logged; patterns analyzed for improvements

Related Topics

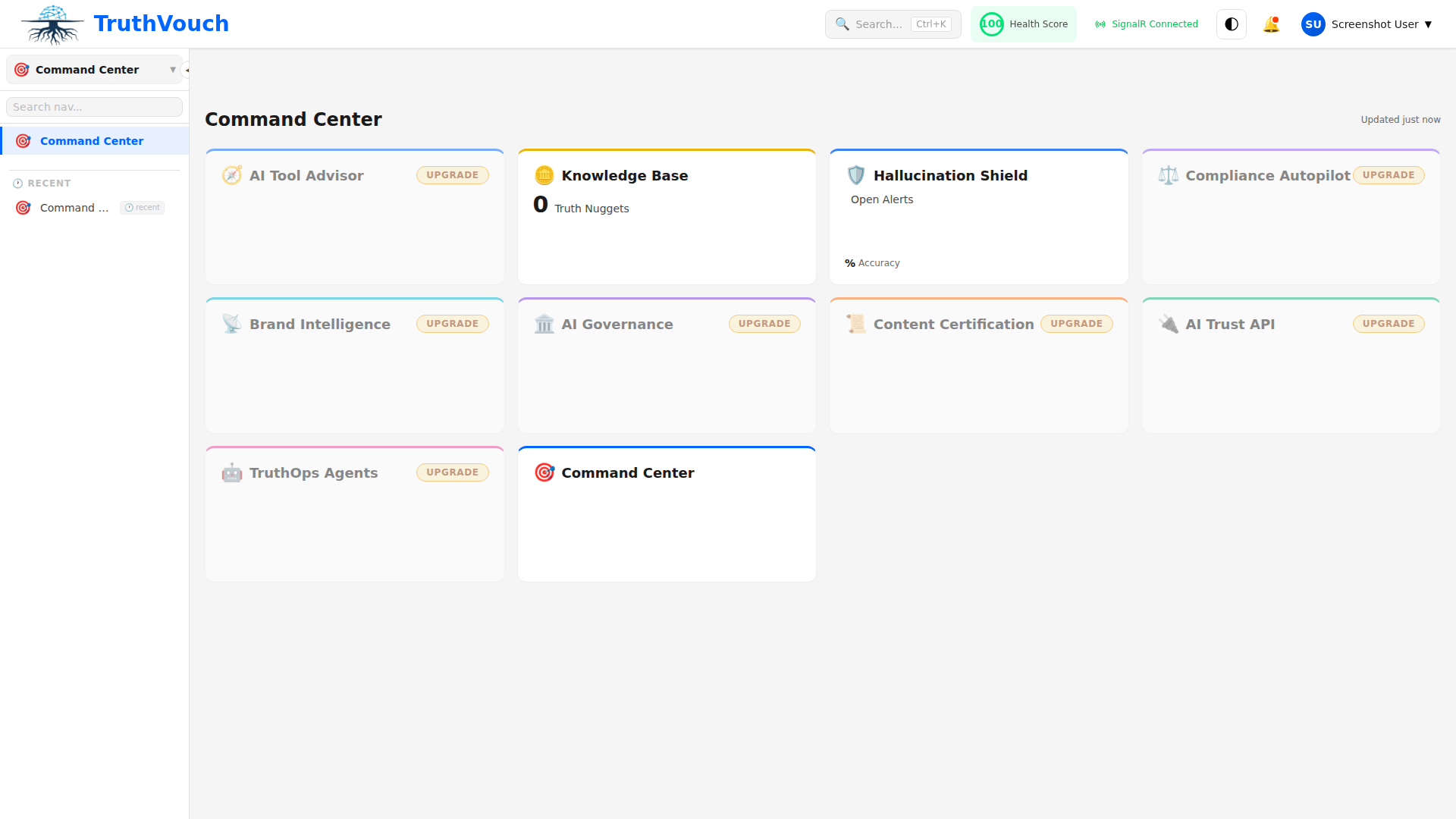

- Agent Inventory — Register and classify agents

- Configuration — Set up autonomy controls and policies

- Monitoring — Track agent health against autonomy level

Next Steps

- Audit current agents — What autonomy level are they at now?

- Assess appropriateness — Is the level right for the risk?

- Define controls — What controls needed for your level?

- Implement — Configure in TruthOps

- Monitor — Track performance and consider autonomy changes